Enterprise DevOps: 5 Keys to Success with DevOps at Scale

After getting a taste of DevOps’ benefits, enterprises naturally seek to widen its adoption. However, the tooling and processes that work for small-scale use cases often fall short when teams try to scale DevOps efforts. You must support all your different teams, toolsets, applications, processes, workflows, release cycles and pipelines — both legacy and cloud native. Otherwise, you can end up with a haphazard mishmash of automation silos or DevOps tools and processes, with widely varying degrees of quality, security, velocity, and — ultimately — success.

And success is critical, since software now underpins and facilitates virtually all business processes. DevOps — with its iterative, collaborative approach to application development and delivery — sits at the center of this new value chain. Implementing it at scale requires the right structure, processes and tools.

Five key principles for Enterprise DevOps success

A pioneer and leader in enterprise DevOps, JFrog knows what it takes to scale DevOps effectively across an organization. With more than 5,800 customers, including many of the world’s largest enterprises, across all verticals, we know a thing or two about DevOps at scale. We’ve partnered with these huge organizations as they embrace DevOps — and now, cloud-native modernization — to deliver high-quality software frequently and at scale. Here are five key principles our customers have put into practice when scaling DevOps in the enterprise.

1 — Central management of end-to-end DevOps processes AND their output

It’s essential to have a DevOps platform that lets you manage, from one central location, not just your end-to-end delivery processes but also their outcomes. Builds and artifacts are the building blocks of software, and they are what flows through your CI/CD pipelines. To enable DevOps at scale, you need a single solution that manages both your end-to-end automation workflows and orchestration and the build outputs those processes produce.

With centralized management and a unified experience, you get clear visibility and a single source of truth for your entire SDLC and all your software assets, in one place. This should include management of binaries, container images, CI/CD pipelines, security and compliance, and software distribution to last-mile deployments across runtime environments, edges, and “things.”

Currently, many CI/CD tools let you manage either processes or outcomes, but not both, and do not support all types of binaries and technologies either. Your DevOps platform should automate and orchestrate all of your point tools, processes, package types, and environments, with support for all technology stacks and artifacts, so you can easily plug in your existing toolset and even legacy scripts to be managed by a single, end-to-end DevOps platform.

By managing delivery processes together with delivery assets and outputs in a single, end-to-end DevOps solution, without having to “context switch” between disparate tools, you’ll be able to:

- Ensure consistency and traceability of all your artifacts throughout the software lifecycle, as they flow through your pipelines from development to production. This will give you a single source of truth for your entire DevOps process, speed up software delivery, and improve code quality, security, and governance.

- Have a universal repository for different types of binaries, container images, environments, point tools and more.

- Centrally manage security and ensure compliance, across all tools, processes, artifacts and repositories, including third party ones, from code to production.

- Have full visibility across your entire pipeline and your entire organization. No more siloed processes or snowflake configurations. With an end-to-end, unified experience in one platform, you have shared visibility and can take action at any point over your dependency downloads, repositories, deployments, builds, pipelines, and releases.

2 — Secured from the start — with built-in DevSecOps and ‘shift left’ capabilities

Enterprise DevOps must incorporate security and compliance checks across the entire software lifecycle — from development to deployment to production. Anywhere between 60 percent and 80 percent of application code is made up of third-party open source components. Using OSS dependencies as part of our applications greatly accelerates developer time-to-value and productivity by re-using existing components available in the ecosystem. However, these dependencies often contain security vulnerabilities and misconfigurations, license compliance issues, or other governance risks.

Enterprises today must manage unprecedented software growth. They’re producing more and more artifacts and applications — all using OSS components. In addition, the continued adoption of microservices-based, cloud-native applications further widens the attack surface due to the myriad of connected services, and to the fact that each container image can contain dozens of layers with hundreds of OSS dependencies.

This scenario gets compounded by the pressing need to patch these vulnerabilities more and more quickly, to try and stay a step ahead of the bad guys, and by the fact that for every 200 developers, the typical enterprise has only one security analyst.

Because security testing and scanning can be a pain, they tend to be pushed towards the end of the software development lifecycle. Tacked on at that point, they create a bottleneck and slow down delivery.

The solution?

- Security and compliance must be first-class citizens, enabled by default, as an integral part of your chosen DevOps platform. No more tool/context switching, or remembering to run a test or initiate a scan.

- Security must be universal, meaning it supports all types of binaries, including cloud-native artifacts such as container images, and is tightly integrated throughout the artifact lifecycle and CI/CD pipeline.

- Deep, recursive scanning is required across all dependencies, including container images, from the application layer through the OS layer. Some tools may not be able to automatically scan and identify the granular dependency tree all the way to the OS level without some serious heavy lifting on the developer side. Beware of these types of solutions. You want to make sure scanning is done automatically, by default, all the way to the core, particularly as more applications become containerized and each image has multiple layers.

- Scanning must be continuous and automated, at the DB level, across all repositories and production instances. Make sure that your scanning isn’t limited to scans triggered by a pipeline, because many dependencies may already be in “the system” and in use across existing applications and builds. Scanning at the DB level ensures that if new vulnerabilities become known, you’re alerted to them and can patch them, even in older applications or re-usable packages that may not be part of an “active” CI/CD delivery pipeline.

In addition to DB-level continuous scanning, you should, of course, be able to trigger security scanning and security gates as part of your CI/CD automation, as another validation stage.

- Enable “shift left.” Your application security solution must integrate with your IDE, so that you can identify and patch vulnerabilities as early as possible in the process, while you’re developing the application. The sooner you catch these vulnerabilities, the cheaper they are to fix, compared with waiting until the code is ready to be built, let alone shipped to production.

- Set up governance rules for security and compliance policies, with the flexibility to adjust the scope of enforcement and the follow-up actions depending on the software development stage and your company’s DevSecOps rollout plan. Your policies may be more or less strict depending on your teams, applications, use cases, DevSecOps maturation, and risk. For example, you could choose to simply send an alert to the developer or security analyst if certain high-severity CVEs are identified. Alternatively, you can automatically fail a build, fail a deployment or the creation of a Release BOM, or even go as far as preventing the initial download and use of certain dependencies that have specific vulnerabilities or license compliance issues.
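To make the scanning and governance points above more concrete, here is a minimal Python sketch that walks a dependency tree recursively and maps the worst finding to an action that depends on the delivery stage. Everything in it is an assumption made for illustration: the `Component` type, the severity scale, the stage names, and the `POLICY` thresholds are hypothetical and do not represent the API of any particular scanner.

```python
from dataclasses import dataclass, field

# Severity ranking used for comparisons (illustrative scale).
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass
class Component:
    """A package or image layer with known findings and nested dependencies."""
    name: str
    severities: list = field(default_factory=list)    # e.g. ["high"]
    dependencies: list = field(default_factory=list)  # nested Component objects


def collect_findings(component, path=()):
    """Recursively yield (dependency_path, severity) for the whole tree."""
    current = path + (component.name,)
    for severity in component.severities:
        yield current, severity
    for dep in component.dependencies:
        yield from collect_findings(dep, current)


# Hypothetical policy: minimum severity that triggers each action, per stage.
POLICY = {
    "development": {"alert": "high"},
    "build": {"alert": "medium", "fail": "critical"},
    "release": {"alert": "low", "fail": "high"},
}


def decide(component, stage):
    """Return 'pass', 'alert', or 'fail' for a component at a given stage."""
    findings = list(collect_findings(component))
    if not findings:
        return "pass"
    worst = max(SEVERITY_RANK[sev] for _, sev in findings)
    rules = POLICY[stage]
    if "fail" in rules and worst >= SEVERITY_RANK[rules["fail"]]:
        return "fail"
    if "alert" in rules and worst >= SEVERITY_RANK[rules["alert"]]:
        return "alert"
    return "pass"


# Example: an image whose OS layer pulls in a library with a high-severity issue.
image = Component(
    "my-app:1.0",
    dependencies=[
        Component("app-lib", severities=["low"]),
        Component("base-os", dependencies=[Component("openssl", severities=["high"])]),
    ],
)

for stage in ("development", "build", "release"):
    print(stage, decide(image, stage))
```

In this sketch the same image only raises an alert during development and build, but fails at release, which mirrors the idea of tightening enforcement as software moves closer to production.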

3 — Future proof with cloud native: the modernization imperative

As you modernize your applications to take advantage of cloud-native patterns and technologies like Kubernetes, remember that you still need to be able to update your legacy applications, and that not all your applications are containerized microservices (yet).

An enterprise DevOps platform should support both cloud-native and legacy applications, so that you can manage the entire lifecycle for either type of application. This includes their binaries, CI/CD processes, security scanning, and more — without having to switch to a separate tool.

Similarly, your DevOps platform has to be hybrid and multi-cloud, so that you can both consume it and use it to manage delivery pipelines across mixed environments of on-prem, private and public cloud, multi-cloud, and edge infrastructure.

4 — Pipeline as code

The ability to define pipelines as code increases developer productivity and helps to scale DevOps efforts. You can store pipeline definitions in your source control, and this makes them shareable (so you can collaborate with your team), versionable, reusable, auditable, and reproducible.

This eliminates redundant work among developers (so that not every team needs to re-invent the wheel to create their CI/CD automation), and also allows you to standardize and use vetted automation processes across the organization.

It also enables your teams to continuously evolve your delivery pipeline like a product: hardening, improving, and enhancing your processes with each subsequent version.

Let’s look at the benefits and some best practices for Pipelines-as-Code in more detail:

- Re-use and standardization across teams: Pipeline-as-code lets you model and define the building blocks that DevOps teams need to standardize for their workflows and processes across the organization. This has two main benefits:

  - Increased speed and developer productivity due to the elimination of redundant work. Rather than having every team build their pipelines from scratch, creating their own processes and policies every time, Pipelines-as-code allows you to re-use and share automation building blocks, including their objects, processes, secrets, resources, configurations, policies, security tests, conditional execution, and more.

  - Improved quality and governance due to the ability to standardize on approved, hardened processes that are consistent across the organization. These efforts eliminate snowflake configurations, automation silos, disparate processes and error-prone scripts that can introduce drift and quality concerns.

- Use parameters in your pipelines’ code. To allow different teams to leverage the same pipeline as code, these building blocks should be parameterized so that every team can dynamically pass in its own inputs (such as secrets, resources, and environment configuration parameters).

- Use declarative automation. Pipelines-as-code building blocks should preferably be declarative, so they can be more easily defined and extended, avoiding error-prone “spaghetti” scripting or heavy lifting. Declarative pipelines are also more suitable for cloud-native environments such as Kubernetes.

- Enable modernization of legacy workflows. Large organizations don’t start with all greenfield applications, and they carry a lot of legacy scripts and technical debt. Your DevOps platform should therefore be flexible enough to let you grandfather in legacy CI/CD technologies (such as old build tools) and even custom scripts (such as your Perl/Bash automation). These need to be supported in your pipeline-as-code scripts as well, with standard steps or integrations that call external systems or custom code. This way, these legacy scripts can be triggered and orchestrated by a modern CI/CD solution, for end-to-end automation, until you have time to refactor or modernize them.

- Platform teams (more on that below) often use Pipelines-as-code as a way to scale DevOps adoption in the organization and align on certified processes, promotion gates, security and compliance checks, and more.
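The parameterized, declarative building blocks described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `PIPELINE_TEMPLATE` structure, its step names, and its fields are hypothetical and are not the schema of any specific CI/CD product. The sketch uses the standard library’s `string.Template` to fill in team-specific inputs.

```python
from string import Template

# A shared, declarative pipeline definition. "${...}" marks team-specific inputs.
# Step names and fields are hypothetical, not a real product schema.
PIPELINE_TEMPLATE = {
    "name": "${team}-delivery",
    "steps": [
        {"name": "build", "image": "${build_image}"},
        {"name": "security-scan", "fail_on": "${fail_on_severity}"},
        {"name": "deploy", "environment": "${environment}"},
    ],
}


def render(node, params):
    """Return a copy of the template with every placeholder filled in.

    Raises KeyError if a required parameter is missing, so an incomplete
    configuration fails fast instead of producing a half-rendered pipeline.
    """
    if isinstance(node, str):
        return Template(node).substitute(params)
    if isinstance(node, list):
        return [render(item, params) for item in node]
    if isinstance(node, dict):
        return {key: render(value, params) for key, value in node.items()}
    return node


# One team re-uses the shared definition with its own inputs.
payments_pipeline = render(
    PIPELINE_TEMPLATE,
    {
        "team": "payments",
        "build_image": "maven:3.9",
        "fail_on_severity": "high",
        "environment": "staging",
    },
)
print(payments_pipeline["name"])
```

Because the template is plain data, it can live in source control next to the application code and be reviewed, versioned, and audited like any other change, while each team supplies only its own parameters.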

5 — Think global, act local, and a word on platform teams

System-level thinking is one of the key tenets of DevOps. It means thinking systematically and holistically about your entire DevOps ecosystem and your software delivery practices — encompassing your teams, culture, value streams, processes, and technology and architecture choices.

To scale DevOps in the enterprise, you need to take into consideration your organization-wide processes and tools, while allowing for flexibility, agility, and the particular needs of each team in order to continue to empower developers and enable autonomy. While you do not want to have a “wild west” and snowflake configurations/scripts for every single thing, you also don’t want to limit freedom of choice or flexibility when certain teams/apps require specific tooling or processes.

Your DevOps platform needs to enable you to “think global, act local.” In other words, consolidation doesn’t have to come at the expense of team autonomy, flexibility, or velocity. For example, you should be able to enforce different security policies, or have the ability to integrate specific best-of-breed point tools, for certain use cases.

This approach reduces drift and prevents you from ending up with snowflake workflows or configurations, while ensuring compliance and keeping the flexibility to support any tool or process in your DevOps ecosystem, for both legacy and cloud-native app delivery.

This is where DevOps platform teams come in!

Application delivery is a critical capability and competitive advantage for organizations today. As scale and deployment frequency grow, many enterprises realize that it is impractical to keep managing delivery through ad-hoc, siloed solutions that differ from team to team.

DevOps platform teams (also known as Platform Ops or Delivery Services) are responsible for selecting the tools and delivery infrastructure to enable DevOps-as-a-Service to all internal teams — with shared tools, processes, and governance. Platform teams provide consistent, standardized processes for all teams to use in order to improve speed, productivity, reliability, and TCO. To that end, once they have selected their chosen tools, platform teams often:

- Leverage Pipelines-as-code as a way to scale and accelerate their DevOps adoption in the organization, while ensuring governance, compliance and auditability. As part of these efforts, they often design the processes and pipelines to be used across the company. They take the lead on developing, hardening and certifying the automation building blocks and provide them to the teams to consume from a central repo. They further enhance these processes as new requirements emerge — meaning that the organization can consume consistent processes that are also evolving and improving, in a scalable way — thus streamlining and accelerating overall delivery.

- Manage a catalog of self-service node pools and secrets rotations to enable developers to provision environments with consistent configurations, in a secured way.

- Enable central access to a shared repository of “certified” packages, to be consumed by developers. The platform team is usually the one responsible for hardening and securing OSS dependencies, running them through compliance tests, and “signing off” on them as vetted and compliant with the organization’s governance policies. Rather than requiring each team to secure and harden OSS dependencies on its own, this pattern eliminates re-work and enables re-use and standardization around shared, secured artifacts.

- Manage the sharing of binaries between teams and pipeline stages, and software distribution to last-mile deployment, in a centralized way, to optimize for throughput and network utilization while ensuring security and governance. For example, in organizations with separation-of-duties requirements, platform teams often manage the automatic certification and transfer of “production-ready” binaries to read-only edge repositories located close to production. Only authorized personnel have access to these repositories, and infrastructure nodes are mapped to pull binaries for deployment sequences only from these approved locations.

- Manage Access Controls (RBAC) in a centralized way to ensure compliance and auditability — with the ability to scope the level of access to all approved components including repositories, artifacts, tools, pipeline processes, approvals, environments, and more.
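As a rough illustration of centrally scoped access control, the Python sketch below models roles as sets of (action, resource pattern) grants and checks a request against them. The role names, actions, and repository names are all hypothetical and chosen to echo the separation-of-duties example above. A real platform would load such definitions from its own permission model rather than a hard-coded dictionary.

```python
from fnmatch import fnmatchcase

# Hypothetical role definitions: each role grants actions on resource patterns.
ROLES = {
    "developer": {
        ("read", "repo:dev-*"),
        ("write", "repo:dev-*"),
        ("read", "repo:certified"),
    },
    "release-manager": {
        ("read", "repo:*"),
        ("promote", "repo:prod-edge"),
    },
    "deployer": {
        ("read", "repo:prod-edge"),  # read-only access to the edge repository
    },
}


def is_allowed(role, action, resource):
    """Return True if the role has a grant matching the action and resource."""
    grants = ROLES.get(role, set())
    return any(
        action == granted_action and fnmatchcase(resource, pattern)
        for granted_action, pattern in grants
    )


# Developers can publish to their own dev repositories...
print(is_allowed("developer", "write", "repo:dev-payments"))
# ...but cannot write to the production edge repository.
print(is_allowed("developer", "write", "repo:prod-edge"))
```

Keeping grants in one place like this makes it straightforward to audit who can do what, and to deny by default: an unknown role or an unlisted action simply matches nothing.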

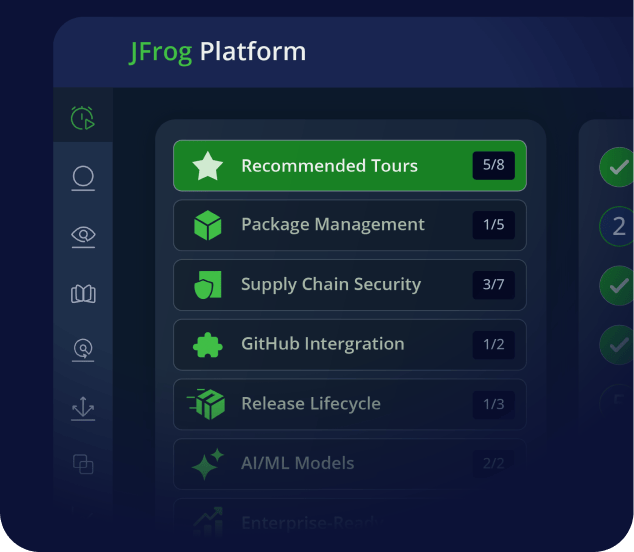

See it in action!

Watch the webinar “Developers Driving DevOps at Scale: 5 Keys to Success,” where Loreli Cadapan, Senior Director of Product Management at JFrog, offers a demo of JFrog’s unified, end-to-end enterprise DevOps platform, including its universal artifact repository, security and compliance scanning with JFrog Xray, CI/CD Pipeline automation, and software distribution features.