Under the Hood: Engineering JFrog Premium Availability

In the modern software factory, 99.9% uptime is no longer the gold standard. A standard 99.9% SLA allows approximately 43 minutes of unplanned downtime per month. Industry data puts the average cost of a single minute of downtime at $9,000, and for large global enterprises that figure can easily be 5x higher. At tens of thousands of dollars per minute, those 43 minutes quickly compound into a catastrophic financial and operational risk.

We recently announced the General Availability of JFrog Premium Availability, providing a contractual 99.99% in-region uptime SLA. Stepping up from three nines to four nines is not achieved by simply updating a contract or throwing more hardware at a problem. It requires a fundamental evolution of the underlying architecture.
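To put the step from three nines to four nines in numbers, the short Python sketch below computes the monthly downtime budget for each SLA and the worst-case cost if that entire budget were consumed, at the $9,000-per-minute average and at 5x that figure. It assumes a 30-day month (43,200 minutes), which is where the roughly 43-minute figure comes from; the function names are illustrative only.

```python
# Assumption: a 30-day month, which yields the ~43-minute figure for 99.9%.
MINUTES_PER_MONTH = 30 * 24 * 60  # 43,200 minutes


def downtime_budget_minutes(sla_percent: float) -> float:
    """Maximum downtime per month permitted by an uptime SLA."""
    return MINUTES_PER_MONTH * (1 - sla_percent / 100)


def worst_case_cost(sla_percent: float, cost_per_minute: float) -> float:
    """Monthly cost if the entire downtime budget were consumed."""
    return downtime_budget_minutes(sla_percent) * cost_per_minute


AVERAGE_COST = 9_000                 # industry average cited above, USD/minute
ENTERPRISE_COST = 5 * AVERAGE_COST   # the "5x higher" large-enterprise case

for sla in (99.9, 99.99):
    print(
        f"{sla}%: {downtime_budget_minutes(sla):.2f} min/month, "
        f"up to ${worst_case_cost(sla, AVERAGE_COST):,.0f} (average) "
        f"or ${worst_case_cost(sla, ENTERPRISE_COST):,.0f} (enterprise)"
    )
```

The budget shrinks from about 43.2 minutes to about 4.32 minutes per month, a tenfold reduction in allowable downtime.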

Here is a deeper look at the engineering, Kubernetes orchestration, and application-level hardening that makes our 99.99% SLA a reality.

1. Significantly Reduced “Noisy Neighbor” Effect with Premium Cells

Across the software industry, standard SaaS environments are typically built on dense shared infrastructure to maximize efficiency and scale. While this model works well for everyday operations, heavy density inherently creates a “noisy neighbor” risk: a massive, unexpected spike in activity from one tenant can inadvertently throttle or degrade the performance of other tenants that rely on the same shared components.

To guarantee stability for mission-critical workloads, we created dedicated environments, which we call “Premium Cells,” specifically to host Premium Availability instances.

- Strict Density Limits: Instead of standard high-density packing, we strictly control and cap the number of instances in these environments to maintain a much lower tenancy per cluster. This architectural isolation significantly reduces the “noisy neighbor” risks commonly found in heavily populated shared environments.

- Guaranteed Resources: Every environment in a Premium Cell is backed by pre-allocated compute resources (CPU and RAM) and enterprise-grade High Availability (HA) databases, such as Cloud PostgreSQL.
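The density cap and up-front resource reservation can be pictured as an admission check. The Python sketch below is hypothetical: the `Cell` class, the `place` function, and the numbers in the usage note are illustrative assumptions, not JFrog's scheduler. A tenant is admitted only if the cell is below its tenant cap and the tenant's full CPU and RAM reservation still fits, so capacity is never overcommitted. (In Kubernetes, a comparable per-pod guarantee comes from setting CPU and memory requests equal to limits for every container, which places the pod in the Guaranteed QoS class.)

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Cell:
    """Hypothetical low-density cell: hard tenant cap plus reserved CPU/RAM."""
    name: str
    max_tenants: int
    cpu_cores: float
    memory_gib: float
    # tenant name -> (reserved CPU cores, reserved memory in GiB)
    tenants: Dict[str, Tuple[float, float]] = field(default_factory=dict)

    def free_cpu(self) -> float:
        return self.cpu_cores - sum(cpu for cpu, _ in self.tenants.values())

    def free_memory(self) -> float:
        return self.memory_gib - sum(mem for _, mem in self.tenants.values())

    def admit(self, tenant: str, cpu: float, mem: float) -> bool:
        """Admit only below the tenant cap and only if the full reservation
        fits; resources are reserved up front and never overcommitted."""
        if len(self.tenants) >= self.max_tenants:
            return False
        if cpu > self.free_cpu() or mem > self.free_memory():
            return False
        self.tenants[tenant] = (cpu, mem)
        return True


def place(cells: List[Cell], tenant: str, cpu: float, mem: float) -> Optional[str]:
    """Return the name of the first cell that admits the tenant, else None."""
    for cell in cells:
        if cell.admit(tenant, cpu, mem):
            return cell.name
    return None
```

For example, a cell created with `max_tenants=2` turns away a third tenant even while it still has spare CPU: the design deliberately trades unused headroom for isolation.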

2. Mastered Graceful Kubernetes Shutdowns

Cloud infrastructure is never static. Behind the scenes, services are constantly being upgraded, scaled out to meet demand, or scaled back in during quieter periods. In a standard environment, these routine operations can cause brief blips: a request that times out, a build that has to retry, or a download that fails mid-stream.

We re-engineered how JFrog handles these transitions so that your teams never notice them happening. When the platform needs to take a service instance offline, whether for a version upgrade, a scaling event, or routine maintenance, it follows a carefully orchestrated sequence:

- New traffic is seamlessly redirected. The moment an instance begins winding down, our routing layer automatically directs all new requests to other healthy instances. There is no window where a request can land on a server that’s about to go offline.

- In-progress work is always completed. Any operation already underway (e.g., an artifact upload, a security scan, an API call) is allowed to finish naturally. The instance stays alive until every active task has completed successfully.

- Shutdown only happens when it’s safe. The platform continuously monitors each instance during this transition. Only once all work is fully complete and all connections are cleanly closed does the instance actually shut down, and not a moment before.

The result: your CI/CD pipelines, security scans, and artifact operations continue uninterrupted, even as the platform evolves underneath them.
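The drain sequence described above can be sketched as a small state machine. This is a hypothetical Python illustration, not JFrog's implementation: `DrainableWorker` stops admitting new work (in Kubernetes terms, what a failing readiness probe signals to the routing layer), waits for in-flight requests to finish, and only then marks itself stopped. In a real pod, `shutdown` would typically be triggered by the SIGTERM the kubelet sends on termination and would need to complete within `terminationGracePeriodSeconds`.

```python
import threading


class DrainableWorker:
    """Hypothetical sketch of a graceful drain: stop new work, finish
    in-flight work, then stop."""

    def __init__(self) -> None:
        self._cond = threading.Condition()
        self._accepting = True
        self._in_flight = 0
        self.stopped = False

    def ready(self) -> bool:
        """What a readiness probe would report; False means route elsewhere."""
        with self._cond:
            return self._accepting

    def handle(self, work):
        """Run one unit of work, refusing it if a drain has begun."""
        with self._cond:
            if not self._accepting:
                raise RuntimeError("draining: send this request to another instance")
            self._in_flight += 1
        try:
            return work()
        finally:
            with self._cond:
                self._in_flight -= 1
                self._cond.notify_all()  # wake a pending shutdown()

    def shutdown(self, timeout=None) -> bool:
        """Drain, then stop. Returns False if work did not finish in time."""
        with self._cond:
            self._accepting = False  # 1. stop admitting new requests
            # 2. wait (releasing the lock) until every in-flight task finishes
            drained = self._cond.wait_for(lambda: self._in_flight == 0, timeout)
            if drained:
                self.stopped = True  # 3. only now is it safe to exit
            return drained
```

The optional `timeout` plays the role of the grace period: if work has not drained in time, `shutdown` returns `False` and the caller decides how to escalate.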

3. Application-Level Resilience for Artifacts and Security Scanning

A resilient infrastructure layer is essential, but it’s not sufficient on its own. The application itself needs to be hardened to handle extreme conditions gracefully. We made targeted improvements across three areas to ensure the platform stays stable even under the heaviest, most unpredictable workloads.

- Intelligent traffic prioritization: Not all operations carry the same urgency. A developer waiting on a build-blocking artifact download needs a faster response than a background indexing job. We introduced a priority-aware queue management system that automatically classifies and ranks incoming work. During traffic spikes, critical operations are served first, while lower-priority tasks are throttled gracefully rather than allowed to compete for the same resources and drag everything down.

- Memory-efficient processing at scale: Enterprise environments routinely push massive volumes of artifacts and package metadata through the platform. Without careful optimization, this can lead to memory pressure that degrades performance for everyone. We fundamentally reworked how the platform handles large datasets, shifting to streaming-based processing and smarter caching strategies that keep memory consumption flat and predictable, regardless of how much data is flowing through the system.

- Redundant security scanning infrastructure: Uptime doesn’t just mean your artifacts are available; it means your software supply chain stays secure without interruption. JFrog’s security capabilities, including Xray, Curation, and Catalog, depend on centralized intelligence services to evaluate threats in real time. We built automatic failover into these services so that if any node experiences a disruption, traffic is instantly rerouted to a healthy one. Your vulnerability scans, policy checks, and curation rules keep running without your pipelines ever knowing something happened behind the scenes.
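The priority-aware queueing described above can be sketched with a binary heap. In this hypothetical Python example, the work types and ranks in `PRIORITY` and the `max_background` quota are illustrative assumptions: build-blocking downloads are popped first, a sequence counter preserves FIFO order within a class, and background indexing is throttled (rejected for later retry) once its quota is full instead of competing with urgent work.

```python
import heapq
import itertools

# Hypothetical work classes; a lower rank is served first.
PRIORITY = {"artifact_download": 0, "api_call": 1, "background_indexing": 2}
BACKGROUND = max(PRIORITY.values())


class PriorityWorkQueue:
    def __init__(self, max_background: int) -> None:
        self._heap = []
        self._seq = itertools.count()  # FIFO tie-breaker within a class
        self._max_background = max_background
        self._background = 0

    def submit(self, kind: str, payload) -> bool:
        """Queue work by priority; throttle background work when its quota is full."""
        rank = PRIORITY[kind]
        if rank == BACKGROUND:
            if self._background >= self._max_background:
                return False  # caller should retry later
            self._background += 1
        heapq.heappush(self._heap, (rank, next(self._seq), kind, payload))
        return True

    def pop(self):
        """Return the most urgent (kind, payload), oldest first within a class."""
        rank, _, kind, payload = heapq.heappop(self._heap)
        if rank == BACKGROUND:
            self._background -= 1
        return kind, payload

    def __len__(self) -> int:
        return len(self._heap)
```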
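Streaming-based processing keeps memory flat because only one fixed-size chunk is held at a time, whatever the artifact's size. As a minimal Python sketch (the function name and the 1 MiB chunk size are assumptions), computing an artifact's SHA-256 checksum this way uses the same memory for a 1 KB file as for a 10 GB one:

```python
import hashlib
import io
from typing import BinaryIO, Tuple

CHUNK_SIZE = 1024 * 1024  # 1 MiB per read (assumed value)


def stream_sha256(stream: BinaryIO, chunk_size: int = CHUNK_SIZE) -> Tuple[str, int]:
    """Hash a stream of any size while holding at most one chunk in memory."""
    digest = hashlib.sha256()
    total = 0
    # iter(callable, sentinel) keeps calling read() until it returns b"" (EOF).
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
        total += len(chunk)
    return digest.hexdigest(), total


# Usage: an in-memory stream stands in for a file or network stream here.
checksum, size = stream_sha256(io.BytesIO(b"example artifact bytes"))
```

A file opened with `open(path, "rb")` can be passed in directly; memory use is bounded by `chunk_size`, not by the file size.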
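The automatic failover for the security intelligence services follows a familiar client-side pattern: try a node, and on failure move on to the next healthy one. The Python sketch below is a simplified, hypothetical illustration; `NodeUnavailable` and the callable-per-node interface are assumptions, and a production version would also track node health and enforce timeouts.

```python
from typing import Callable, List


class NodeUnavailable(Exception):
    """Raised by a (hypothetical) node client when the node cannot serve."""


def call_with_failover(nodes: List[Callable[[str], str]], request: str) -> str:
    """Send the request to the first node that answers, rerouting on failure."""
    errors = []
    for node in nodes:
        try:
            return node(request)
        except NodeUnavailable as exc:
            errors.append(str(exc))  # remember why, then try the next node
    raise NodeUnavailable(f"all {len(nodes)} nodes failed: {errors}")
```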

4. Infrastructure That Scales With You, Not Against You

Guaranteeing 99.99% uptime isn’t just about surviving failures; it’s also about absorbing sudden surges in demand without breaking a sweat. We made significant upgrades to how the platform scales and manages its core resources.

- Intelligent, demand-aware scaling: Traditional autoscaling reacts to broad metrics like CPU usage, which often means the platform is already under strain before new capacity kicks in. We enhanced our scaling engine to respond to real-time workload signals so additional capacity is provisioned precisely when it’s needed, not after performance has already started to degrade. The result is a platform that absorbs traffic spikes smoothly, keeping build and deployment times consistent even during peak usage.

- Optimized database connection management: In high-throughput environments, database connections can become a hidden bottleneck. We introduced an advanced connection pooling layer that efficiently multiplexes thousands of application requests across a managed pool of database connections. This prevents connection exhaustion during demand surges and ensures that database-dependent operations, from metadata lookups to security scans, remain fast and reliable under any load.
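Demand-aware scaling can be expressed as sizing replicas from a workload signal such as queue depth rather than CPU. The Python function below is a hypothetical sketch of that calculation, similar in spirit to a Kubernetes HorizontalPodAutoscaler driven by an external metric with an average-value target; `pending_jobs`, `jobs_per_replica`, and the replica bounds are illustrative parameters.

```python
import math


def desired_replicas(pending_jobs: int, jobs_per_replica: int,
                     min_replicas: int, max_replicas: int) -> int:
    """Size capacity from queued work rather than from CPU after the fact."""
    wanted = math.ceil(pending_jobs / jobs_per_replica)
    # Never drop below the floor (warm capacity) or exceed the ceiling (cost cap).
    return max(min_replicas, min(max_replicas, wanted))
```

Because the input is the backlog itself, capacity grows as soon as work starts queuing, before CPU on existing replicas saturates.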
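Connection pooling multiplexes many callers over a small, fixed set of database connections: callers borrow a connection, use it, and return it, and when all are busy they wait instead of opening new ones. The minimal, thread-safe Python sketch below illustrates the idea with the standard library only; `ConnectionPool` and its `factory` argument are hypothetical, and a real pooler would also validate and recycle connections.

```python
import contextlib
import queue
from typing import Any, Callable, Iterator, Optional


class ConnectionPool:
    """Hypothetical fixed-size pool shared by many concurrent callers."""

    def __init__(self, factory: Callable[[], Any], size: int) -> None:
        self._idle = queue.Queue(maxsize=size)
        for _ in range(size):
            self._idle.put(factory())  # open all connections up front

    @contextlib.contextmanager
    def connection(self, timeout: Optional[float] = None) -> Iterator[Any]:
        """Borrow a connection, waiting (up to timeout) rather than opening
        a new one. Raises queue.Empty if none frees up in time."""
        conn = self._idle.get(timeout=timeout)
        try:
            yield conn
        finally:
            self._idle.put(conn)  # always return it, even if the caller raised
```

However many threads call `connection()`, the database never sees more than `size` connections, which is what prevents exhaustion during a surge.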

5. Took Proactive Observability Even Further

Even the most resilient architecture requires elite operational monitoring. To support a 99.99% SLA, we pushed our already proactive observability strategy even further:

- Proactive Thresholds: Premium Cell clusters use specifically tuned, lower monitoring thresholds. This lets us catch latent issues early and shorten our Time-to-Detect (TTD), surfacing problems well before standard alerts would trigger.

- Validated Rollouts: We protect these clusters using “last-in-line” deployment sequencing, ensuring Premium Availability customers only receive the most heavily validated and mature version rollouts.

- Prioritized Incident Handling: Incidents are escalated to the highest severity (Sev0/1) with heightened alert sensitivity.
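Tuning monitoring thresholds lower means the same signal can fire an alert for a premium cluster while staying silent on a standard one. The Python sketch below makes that concrete; the `AlertPolicy` fields and every threshold value are hypothetical, chosen only to illustrate the effect.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AlertPolicy:
    max_error_rate: float      # fraction of failed requests
    max_p99_latency_ms: float  # 99th-percentile latency


# Hypothetical thresholds, for illustration only.
STANDARD = AlertPolicy(max_error_rate=0.05, max_p99_latency_ms=2000)
PREMIUM = AlertPolicy(max_error_rate=0.01, max_p99_latency_ms=800)


def breaches(policy: AlertPolicy, error_rate: float, p99_latency_ms: float) -> List[str]:
    """Return the signals that exceed the policy's thresholds."""
    fired = []
    if error_rate > policy.max_error_rate:
        fired.append("error_rate")
    if p99_latency_ms > policy.max_p99_latency_ms:
        fired.append("p99_latency")
    return fired
```

With these values, a 2% error rate and a 1.2-second p99 latency stay below the standard thresholds but trip both premium ones, so the issue is detected earlier.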

By decoupling our architecture, introducing rigorous Kubernetes state management, and optimizing memory handling at the artifact level, we transformed JFrog’s SaaS uptime from “industry standard” reliability into a best-in-class, guaranteed 99.99% utility.

6. White-Glove Operations and Deployment Safety

Engineering a resilient platform is only half the equation. The other half is how that platform is operated day-to-day. Premium Availability customers receive a fundamentally different operational treatment across two critical areas.

- Prioritized incident response. When an issue is detected on a Premium Availability environment, it is automatically escalated to the highest severity tier within JFrog’s incident management process. This means faster mobilization of engineering resources, dedicated war-room coordination, and direct engagement from senior technical staff. Premium Availability incidents don’t wait in the queue; they move to the front of the line.

- Battle-tested releases only. Premium Availability environments follow a “last-in-line” deployment strategy, meaning they are always the final environments to receive any platform update. By the time a new version reaches a Premium Availability customer, it has already been running in production across JFrog’s broader fleet for an extended period, thoroughly validated under real-world conditions at scale. This approach eliminates the risk of early-stage rollout issues and ensures that your environment only ever runs the most stable, proven version of the platform.
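A “last-in-line” strategy amounts to ordering environments into rollout rings and gating each promotion on how long the release has soaked in the previous ring. The Python sketch below is hypothetical: the ring names, `min_soak_hours=72.0`, and the zero-open-incidents rule are illustrative assumptions, not JFrog's actual release policy.

```python
from typing import Dict, List

# Hypothetical rollout rings, earliest first; Premium Availability is last.
RING_ORDER = ["internal", "canary", "standard", "premium"]


def rollout_plan(environments: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Order environments so each ring receives a release only after earlier rings."""
    rank = {ring: i for i, ring in enumerate(RING_ORDER)}
    # sorted() is stable, so environments keep their relative order within a ring.
    return sorted(environments, key=lambda env: rank[env["ring"]])


def can_promote(target_ring: str, soak_hours: float, open_incidents: int,
                min_soak_hours: float = 72.0) -> bool:
    """Gate promotion: the previous ring must have run the release for
    min_soak_hours with no open incidents (assumed policy)."""
    if target_ring == RING_ORDER[0]:
        return True  # the first ring has no predecessor to soak in
    return soak_hours >= min_soak_hours and open_incidents == 0
```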

JFrog customers can contact their account representative today to discuss upgrading to Premium Availability.

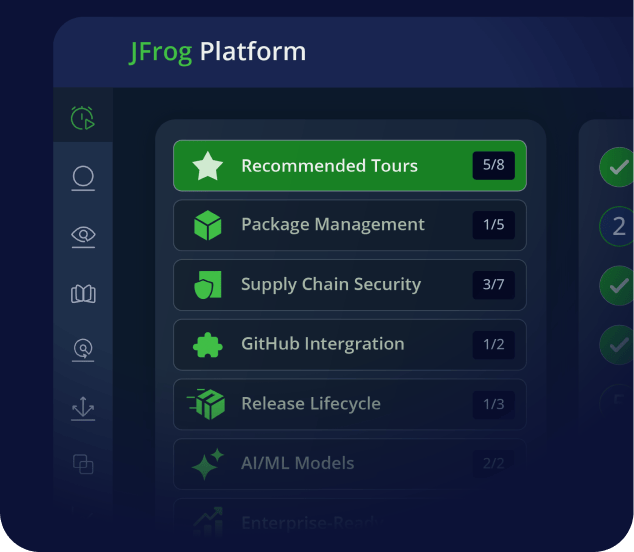

If you’re new to JFrog, we invite you to take an online tour, book a personalized demo, or start a free trial to see how your organization can achieve 99.99% uptime resilience today.