Trusted AI Adoption (Part 1): Consolidation

Imagine your lead Software Engineer walks into your office and says, “Good news! I just deployed that critical update to production. I wrote the code on my personal laptop, didn’t run it through CI/CD, skipped the security scan, and just copied the files directly to the server with a USB drive.”

You would fire them. Or you would revoke their access immediately. In modern software development, this scenario is unthinkable; you’ve spent the last decade building a fortress of automated pipelines, immutable binaries, rigorous scanning, and strict governance.

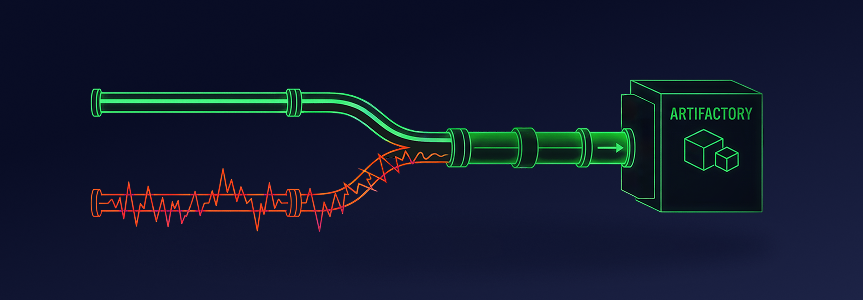

But here is the AI paradox: While your core software runs on a high-security superhighway, your AI-driven development is currently off-roading. Developers are pulling models directly from public hubs to local machines and spinning up Model Context Protocol (MCP) servers—the tools that give AI agents “hands” to access your data—without IT oversight. When an AI application is finally “ready,” it’s often shared via email, Slack, or manual cloud uploads with zero lineage or security context.

You haven’t just built a silo; you’ve accidentally created a shadow supply chain.

This blog is the first in a 5-part series based on our “From Chaos to Control” webinar and the accompanying Trusted AI 2026 Playbook, where we break down the five essential pillars of trusted AI adoption. Before we can address advanced security or governance, we must fix the foundation. It’s time to talk about Pillar 1: Consolidate.

The Tale of Two Lifecycles

Most enterprises today unknowingly operate a dual architecture. They have one lifecycle for code and a completely separate lifecycle for Artificial Intelligence.

- Track A: The Software Supply Chain (The Fortress). This is your mature DevOps and DevSecOps environment. It’s automated, visible, and governed. Your artifacts (e.g., Docker images, npm packages, JAR files) flow through CI/CD pipelines, automated testing, and security scanning.

- The Result: You know exactly what is in production.

- Track B: The AI Supply Chain (The Wild West). This is your emerging AI environment. It’s manual, experimental, and disconnected. Here, the artifacts (e.g. heavy model weights, local MCP servers, Python scripts, .md files) live on local laptops or hidden within your software supply chain.

- The Result: “Black boxes” are constantly entering your production environment, and you cannot govern what you cannot see.

The strategic problem isn’t the use of different tools, but that Track B bypasses the safety checks of Track A while maintaining the same access to your enterprise systems.

Your engineers aren’t building Track B out of malice but out of necessity: they are trading security for velocity to avoid the friction of current governance and secure a “first-mover advantage.”

Faced with this chaos, security leaders often instinctively try to block AI tools and public hubs. However, you cannot ban efficiency; blocking doesn’t stop “Track B,” it simply pushes it deeper into the shadows as engineers bypass controls to maintain productivity. The goal shouldn’t be to stop AI, but to merge both tracks onto a single, trusted path.

Trusted AI Pillar 1: Consolidate

So, how do you fix this? The answer is Consolidation.

Let’s be clear: this isn’t about reorganizing teams and tools; it’s about recognizing that code and AI assets are part of the same application.

To achieve Trusted AI, you must bring all AI assets—proprietary models, public open-source models, Model Context Protocol (MCP) servers, and whatever comes next—into the same system of record as your software binaries. You need to move from parallel tracks to a consolidated software supply chain.

In a consolidated supply chain, an AI model or an MCP server is treated exactly like a Docker image or Maven package. It is:

- Immutable: Once created, it cannot be secretly changed.

- Versioned: You can trace every iteration back to its source.

- Scanned: It passes through the same security policies as your code.

- Centralized: It lives in a single, governed repository that your developers can use.
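To make the "immutable" and "versioned" properties concrete, here is a minimal Python sketch. It assumes a toy in-memory registry; the class names (`ArtifactRecord`, `Registry`) and the artifact name are hypothetical and do not correspond to any specific product API. A real registry would persist records and enforce the same rules server-side.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class ArtifactRecord:
    name: str     # hypothetical artifact name, e.g. a model or MCP server
    version: str
    sha256: str   # content digest recorded at publish time


class Registry:
    """Toy in-memory registry used only for illustration."""

    def __init__(self):
        self._records = {}

    def publish(self, name: str, version: str, content: bytes) -> ArtifactRecord:
        key = (name, version)
        if key in self._records:
            # Immutability: a published version is never overwritten.
            raise ValueError(f"{name}@{version} already exists")
        digest = hashlib.sha256(content).hexdigest()
        record = ArtifactRecord(name, version, digest)
        self._records[key] = record
        return record

    def verify(self, name: str, version: str, content: bytes) -> bool:
        """Return True only if the content matches the recorded digest."""
        record = self._records.get((name, version))
        if record is None:
            return False
        return record.sha256 == hashlib.sha256(content).hexdigest()
```

Publishing `("sentiment-model", "1.0.0")` a second time raises an error, and `verify` fails for any bytes that differ from what was originally published, which is what lets you trace a deployed asset back to one exact version.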

What Does a “Consolidated Software Supply Chain” Look Like?

By consolidating your AI and traditional software supply chains, you eliminate the need for developers to “off-road.” Instead, you bring AI assets directly into their existing workflow, securing them under the same “Track A” policies and environments you already trust.

1. Proxy public hubs (stop the direct downloads)

Instead of letting developers download models directly from Hugging Face or MCP servers from GitHub, you route these requests through a centralized proxy (a remote repository).

By consolidating your supply chain, you provide developers with a faster, more reliable experience through local caching while simultaneously establishing a policy “gate” to inspect incoming assets before they enter your environment.
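The caching-plus-gate behavior described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular repository manager is implemented: `CachingProxy`, `PolicyViolation`, the `ALLOWED_LICENSES` set, and the shape of the asset metadata are all hypothetical.

```python
from typing import Callable, Dict

# Hypothetical policy: only these licenses may enter the environment.
ALLOWED_LICENSES = {"apache-2.0", "mit"}


class PolicyViolation(Exception):
    """Raised when an upstream asset fails the gate."""


class CachingProxy:
    def __init__(self, fetch_upstream: Callable[[str], dict]):
        # fetch_upstream stands in for a call to a public hub.
        self._fetch_upstream = fetch_upstream
        self._cache: Dict[str, dict] = {}
        self.upstream_calls = 0

    def get(self, asset_id: str) -> dict:
        # Serve from the local cache when the asset was already approved.
        if asset_id in self._cache:
            return self._cache[asset_id]
        self.upstream_calls += 1
        asset = self._fetch_upstream(asset_id)
        # Policy gate: inspect the asset before it is cached or returned.
        if asset.get("license") not in ALLOWED_LICENSES:
            raise PolicyViolation(f"{asset_id}: license not allowed")
        self._cache[asset_id] = asset
        return asset


# Usage with a fake upstream (asset names are made up for the example).
FAKE_HUB = {
    "org/good-model": {"license": "apache-2.0"},
    "org/risky-model": {"license": "unknown"},
}
proxy = CachingProxy(lambda asset_id: FAKE_HUB[asset_id])
approved = proxy.get("org/good-model")
```

Rejected assets are never cached, so they cannot be served to a developer later. On the client side, some tools can be redirected to a proxy through configuration; for example, the `huggingface_hub` library reads an `HF_ENDPOINT` environment variable. The exact proxy URL depends on your repository manager.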

2. An AI registry for everything

The rise of Agentic AI has introduced MCP servers: connectors that allow AI models to access your enterprise systems (e.g., to read your documents or query your database). An unvetted MCP server is potentially a rogue agent with keys to your data.

By consolidating all AI components, including MCP servers, into the same registry as your models and software, you ensure that no agent can run in your environment unless it has been vetted, approved, and pulled from the trusted source.
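As a rough sketch of that admission rule, the Python below refuses to launch any MCP server that is not listed in the registry with an approved status. The `Status` values, the `McpServerEntry` type, the `may_launch` helper, and the server names are assumptions made for illustration only.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Tuple


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    BLOCKED = "blocked"


@dataclass(frozen=True)
class McpServerEntry:
    name: str
    version: str
    status: Status


# Registry keyed by (name, version); contents are made up for the example.
RegistryIndex = Dict[Tuple[str, str], McpServerEntry]


def may_launch(registry: RegistryIndex, name: str, version: str) -> bool:
    """Default deny: unknown or unapproved servers are never launched."""
    entry = registry.get((name, version))
    return entry is not None and entry.status is Status.APPROVED


registry: RegistryIndex = {
    ("docs-reader", "1.2.0"): McpServerEntry("docs-reader", "1.2.0", Status.APPROVED),
    ("db-admin", "0.3.1"): McpServerEntry("db-admin", "0.3.1", Status.PENDING),
}
```

The important design choice is the default: a server that is missing from the registry is treated the same as one that was explicitly blocked.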

3. Always link the context

This is the “secret sauce” of consolidation. If an MCP server gives an AI agent “hands,” consolidation provides the “map”—the secure enterprise context required to do meaningful work. Without this connection, an AI agent is just a powerful tool operating in the dark.

By merging your environments, you can link every model and MCP server directly to the specific software application that consumes it. This creates full traceability: if a feature breaks or an AI agent goes rogue, you can instantly trace the issue back to the exact model version and binary that power it.
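One simple way to picture this linkage is a two-way index between application releases and the AI assets they consume. The Python sketch below is a minimal illustration; `LineageIndex` and the release and asset identifiers are hypothetical, and a real system would derive these links from build metadata rather than manual calls.

```python
from collections import defaultdict
from typing import List


class LineageIndex:
    """Two-way index between app releases and the assets they consume."""

    def __init__(self):
        self._deps_by_app = defaultdict(set)
        self._apps_by_dep = defaultdict(set)

    def link(self, app_release: str, dependency: str) -> None:
        # Record the relationship in both directions.
        self._deps_by_app[app_release].add(dependency)
        self._apps_by_dep[dependency].add(app_release)

    def dependencies_of(self, app_release: str) -> List[str]:
        """Which models and MCP servers power this release?"""
        return sorted(self._deps_by_app.get(app_release, ()))

    def consumers_of(self, dependency: str) -> List[str]:
        """Which releases are affected if this asset is compromised?"""
        return sorted(self._apps_by_dep.get(dependency, ()))


lineage = LineageIndex()
lineage.link("support-bot:2.4.0", "model/intent-classifier:1.3.0")
lineage.link("support-bot:2.4.0", "mcp/docs-reader:1.2.0")
lineage.link("search-api:5.1.0", "model/intent-classifier:1.3.0")
```

With this in place, an incident on `model/intent-classifier:1.3.0` can be traced forward to every consuming release, and a misbehaving release can be traced back to the exact asset versions behind it.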

The Payoff: One Source of Truth for the AI Era

When you consolidate Track A and Track B, the shadow supply chain disappears. By bringing AI assets into your existing governed lifecycle, “Governance” shifts from a roadblock into a competitive advantage. You gain a single source of truth where you can automatically block non-compliant licenses or malicious MCP servers at the gate, long before they reach production.

Safety doesn’t mean slowing down; you’re building a foundation that lets you trust AI adoption at scale.

Ready to Merge the Tracks?

Consolidation is the non-negotiable first step, but simply having an AI registry isn’t enough. Your developers may already be using AI in ways you can’t see—through direct API calls to external providers, unmanaged packages from Hugging Face, or unauthorized MCP connections.

This is Shadow AI: any unmanaged AI asset operating without oversight.

In our next blog post on Pillar 2: Detect, we’ll explore how to “turn on the lights” in your supply chain to reveal and control these hidden connections.

Don’t want to wait? Book a live demo to see how the JFrog Platform brings models, MCPs, and software into a single, governed supply chain today.