From Shai-Hulud to LiteLLM: Supply Chain Attackers Are Coming for Your Agents

The LiteLLM supply chain compromise of March 24, 2026, is not an isolated incident. It is the latest and perhaps most dangerous chapter in an evolving attacker playbook that JFrog Security Research has been tracking for years. The target has shifted from developers to the AI agents that developers now rely on to build software.

The Developer Has Always Been the Target

Supply chain attacks follow a simple formula: find a trusted open-source package, poison it, and let the ecosystem do the distribution. Shai-Hulud, first reported in September 2025 and extensively analyzed by the JFrog Security Research team, is a good example. Built around a self-replicating worm embedded in npm packages, it was designed to steal developer tokens and automatically re-infect other packages those developers maintained. Because it spread exponentially without any direct attacker involvement, it was considered one of the most severe JavaScript supply chain attacks on record.

To help organizations protect themselves against this type of threat, JFrog Curation provides an automated gatekeeping layer that blocks malicious and vulnerable open-source packages before they reach developer environments.

The SDLC Is Changing – and So Is the Attack Surface

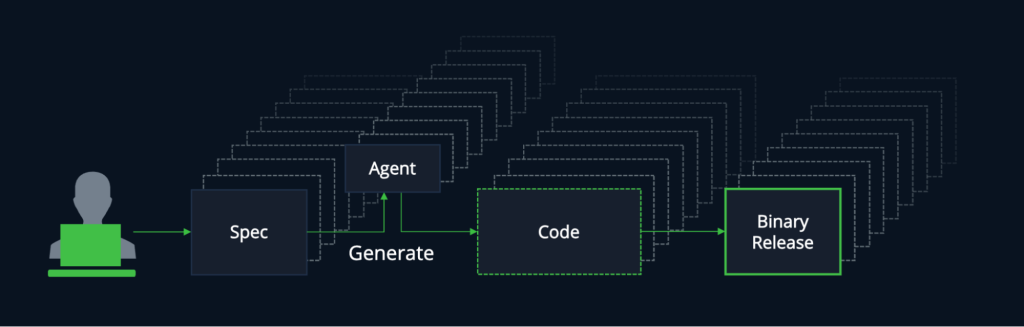

In addition to targeting developers themselves, malicious actors are also finding vulnerabilities in AI-generated code. Software developers no longer rely solely on their own coding skills; instead, they orchestrate multiple AI agents simultaneously for code generation, testing, research, and deployment. The development lifecycle has evolved from a linear, human-driven flow into a distributed, multi-agent pipeline.

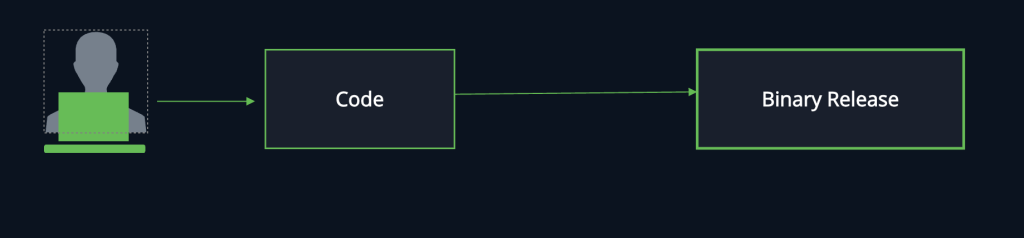

Before AI-generated code:

After AI-generated code:

Each developer can now run several agents in parallel, each interfacing with AI packages, MCP servers, models, and skills, resulting in a rapidly expanding attack surface.

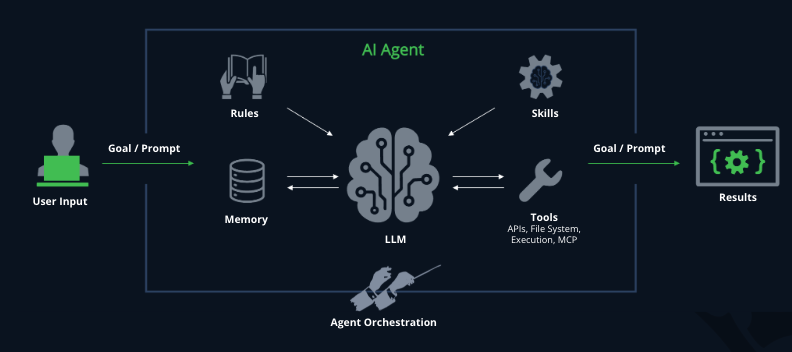

Understanding how an agent works is key to understanding this new attack surface. An AI agent isn’t just a model; it’s a composition of AI assets:

- The LLM at its core

- The rules and memory that shape its behavior

- The agent skills it can execute

- The MCP servers that connect it to external systems and data

Each of these components represents a potential attack/risk vector.

Anatomy of an AI agent

Agents Are Now the Target

When supply chain controls made poisoning developer environments more challenging, attackers pivoted. If you can’t get past the developer, why not go after their tools?

September 2025 postmark-mcp: The first malicious MCP server in the wild.

A threat actor cloned the legitimate Postmark MCP repository and published a near-identical npm package. For fifteen versions, it worked flawlessly and was trusted by developers across hundreds of workflows. Then version 1.0.16 introduced a single line of code, a hidden BCC, that silently forwarded every email sent through the MCP server to an attacker-controlled domain. Password resets, invoices, and internal memos were all quietly exfiltrated from inside AI agent workflows that had extended full trust to the connector.

What made it particularly dangerous is that MCP servers run with high trust and broad permissions inside agent toolchains. A rogue MCP server doesn’t just poison a single codebase; it becomes a persistent upstream control plane capable of manipulating an entire AI-driven workflow and all of its connected systems.

The postmark-mcp attack was a warning shot. The LiteLLM compromise was the escalation.

The LiteLLM Attack: A New Level of Sophistication

LiteLLM is one of the most critical packages in the AI ecosystem, acting as a universal gateway to over 100 LLM APIs including OpenAI, Anthropic, AWS Bedrock, and Google VertexAI. The package sees approximately 3.4 million downloads per day and is present in 36% of cloud environments. It is also a common transitive dependency in MCP plugins and AI agent frameworks, which is exactly why it was targeted. See JFrog Security Research’s full technical analysis of the attack for details.

The attack was carried out by TeamPCP, the same group behind recent compromises of Aqua Security’s Trivy scanner and Checkmarx’s KICS GitHub Action. This was not opportunistic — it was a coordinated, cascading campaign.

How It Happened

LiteLLM’s CI/CD pipeline ran Trivy as part of its build process, without a pinned version. On March 19, TeamPCP had already compromised Trivy’s GitHub Action. When LiteLLM’s build ran, the compromised Trivy action exfiltrated the project’s PyPI publish token from the GitHub Actions runner. Armed with that credential, the attackers published litellm versions 1.82.7 and 1.82.8 on the morning of March 24, each containing malicious payloads that bypassed LiteLLM’s normal release workflow entirely.
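The unpinned-dependency problem described above is easy to check for mechanically. The following is a minimal sketch, assuming the CI dependency is referenced as a GitHub Action through a `uses:` line; it flags any reference that is not pinned to a full 40-character commit SHA, since tags and branches can be moved after the fact. The function name, the regular expressions, and the example workflow (including the `example-org` actions) are illustrative assumptions, not taken from LiteLLM’s actual pipeline.

```python
import re

# A "uses:" reference counts as pinned only if it targets a full 40-char commit SHA.
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*([^\s#]+)")
SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return action references that are not pinned to a full commit SHA."""
    findings = []
    for line in workflow_text.splitlines():
        match = USES_RE.match(line)
        if not match:
            continue
        ref = match.group(1).strip("'\"")
        if ref.startswith("./") or ref.startswith("docker://"):
            continue  # local actions and container images are out of scope here
        _, _, version = ref.partition("@")
        if not SHA_RE.match(version):
            findings.append(ref)
    return findings


# Hypothetical workflow used only to exercise the check.
EXAMPLE_WORKFLOW = """
jobs:
  scan:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: example-org/scanner-action@main
      - uses: example-org/pinned-action@0123456789abcdef0123456789abcdef01234567
"""

if __name__ == "__main__":
    for ref in unpinned_actions(EXAMPLE_WORKFLOW):
        print("not pinned to a commit SHA:", ref)
```

A check like this is a line-based heuristic rather than a full YAML parser, so treat its output as a review list, not a verdict.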

What the Malware Did

Once triggered, the payload ran a three-stage attack:

- Harvesting credentials such as SSH keys; AWS, GCP, and Azure cloud tokens; Kubernetes secrets; crypto wallets; and .env files.

- Attempting lateral movement across Kubernetes clusters by deploying privileged pods to every node.

- Installing a persistent systemd backdoor that polls for additional binaries from attacker-controlled infrastructure.

All collected data was encrypted and exfiltrated to models.litellm.cloud, a domain impersonating legitimate LiteLLM infrastructure that was registered the same day as the attack.
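A quick first triage step is to check whether an environment has one of the two malicious versions installed. The sketch below uses only the standard library’s `importlib.metadata`; the version set comes from this article, while the function names are illustrative. A clean result here says nothing about whether a malicious version was installed and later replaced, so it complements credential rotation rather than replacing it.

```python
from importlib import metadata
from typing import Optional

# Versions named in this article as malicious.
COMPROMISED_LITELLM = {"1.82.7", "1.82.8"}


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a package, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def is_compromised(version: Optional[str]) -> bool:
    """True only when the version is one of the known-malicious releases."""
    return version in COMPROMISED_LITELLM


if __name__ == "__main__":
    version = installed_version("litellm")
    if version is None:
        print("litellm is not installed in this environment")
    elif is_compromised(version):
        print(f"litellm {version} is a known-malicious release: assume compromise")
    else:
        print(f"litellm {version} is not one of the versions named in the advisory")
```

Run it inside each virtual environment, container image, and CI runner image, since each has its own set of installed packages.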

Version 1.82.8 was especially aggressive, as it abused Python’s .pth file mechanism, which fires on every Python interpreter startup regardless of whether LiteLLM is explicitly imported. The payload was double base64-encoded to evade static analysis.
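To see why the .pth mechanism matters, recall that Python’s `site` module executes any line in a .pth file that begins with `import` followed by a space or tab. The sketch below lists such lines across the current interpreter’s site-packages directories. The function names are illustrative, and a hit is only a lead for review: some legitimate tools also ship .pth files with import lines.

```python
import site
import sysconfig
from pathlib import Path


def executable_pth_lines(pth_text: str) -> list[str]:
    """Return the lines of a .pth file that the site module would execute."""
    return [
        line
        for line in pth_text.splitlines()
        if line.startswith(("import ", "import\t"))
    ]


def site_dirs() -> list[Path]:
    """Collect the site-packages directories of the running interpreter."""
    paths = sysconfig.get_paths()
    candidates = {paths["purelib"], paths["platlib"], site.getusersitepackages()}
    return [Path(p) for p in sorted(candidates) if Path(p).is_dir()]


def scan_pth_files() -> dict[str, list[str]]:
    """Map each .pth file to the executable lines it contains, if any."""
    findings: dict[str, list[str]] = {}
    for directory in site_dirs():
        for pth in sorted(directory.glob("*.pth")):
            try:
                text = pth.read_text(encoding="utf-8", errors="replace")
            except OSError:
                continue  # unreadable file: skip rather than abort the scan
            lines = executable_pth_lines(text)
            if lines:
                findings[str(pth)] = lines
    return findings


if __name__ == "__main__":
    for path, lines in scan_pth_files().items():
        print(path)
        for line in lines:
            print("   ", line[:120])  # truncate long (possibly encoded) payloads
```

Unusually long or base64-looking import lines deserve the closest attention, given that the payload described above was double-encoded.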

How It Was Discovered – Inside an Agent

The attack was first discovered when the package was pulled in as a transitive dependency by an MCP plugin running inside Cursor. A researcher’s machine became unresponsive from RAM exhaustion, a side effect of the malware’s own fork-bomb bug. The malicious package had reached an AI agent’s runtime before any human knowingly installed it.
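Because the package arrived as a transitive dependency, it helps to ask which installed packages declare a dependency on it. The following sketch walks the installed distributions with `importlib.metadata` and reports the direct dependents of a target package. The helper names are illustrative, and the requirement parsing is a deliberately simple regular expression rather than a full requirement-specifier parser.

```python
import re
from importlib import metadata


def requirement_name(requirement: str) -> str:
    """Extract the project name from a requirement string and normalize it."""
    match = re.match(r"\s*([A-Za-z0-9][A-Za-z0-9._-]*)", requirement)
    name = match.group(1) if match else ""
    # Treat runs of "-", "_" and "." as equivalent and ignore case.
    return re.sub(r"[-_.]+", "-", name).lower()


def dependents_of(target: str) -> list[str]:
    """Return installed distributions that directly require the target package."""
    wanted = requirement_name(target)
    found = set()
    for dist in metadata.distributions():
        for req in dist.requires or []:
            if requirement_name(req) == wanted:
                meta = dist.metadata
                name = meta.get("Name") if meta is not None else None
                if name:
                    found.add(name)
    return sorted(found)


if __name__ == "__main__":
    for name in dependents_of("litellm"):
        print("depends on litellm:", name)
```

Calling `dependents_of` again on each result walks one more level up the chain, which is how an MCP plugin several layers removed would eventually show up.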

The Organizational Impact

Because LiteLLM typically sits directly between applications and multiple AI service providers, it holds API keys, environment variables, and sensitive configuration data for an organization’s entire AI stack. A single compromised install exposes the credentials for every AI service that an organization uses, across every environment where LiteLLM is run: Developer machines, CI/CD pipelines, staging, and production alike. The malicious versions were live on PyPI for approximately three hours. Given the package’s download volume, the potential exposure in that window was significant.
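When scoping a rotation after a suspected compromise, a rough inventory of what was reachable from the process environment is a useful starting point. This sketch lists environment variable names that look like credentials, based on an assumed naming heuristic; it prints names only and never values. The pattern and function name are illustrative, and secrets stored in files or secret managers need a separate inventory.

```python
import os
import re

# Heuristic: names containing these fragments usually hold credentials.
SECRET_NAME_RE = re.compile(
    r"(API_?KEY|TOKEN|SECRET|PASSWORD|CREDENTIAL)", re.IGNORECASE
)


def credential_like_names(environ) -> list[str]:
    """Return variable names that look like credentials, sorted for stable output."""
    return sorted(name for name in environ if SECRET_NAME_RE.search(name))


if __name__ == "__main__":
    for name in credential_like_names(os.environ):
        print("rotate candidate:", name)  # names only, never the values
```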

The Pattern Is Clear

| Attack | Vector | Target |

|---|---|---|

| Shai-Hulud (npm) | Self-replicating worm in open-source packages | Developer environments |

| postmark-mcp (npm) | Malicious MCP server | AI agent workflows |

| LiteLLM (PyPI) | Poisoned AI infrastructure package via compromised CI tool | Agent runtimes + CI/CD |

The attack surface isn’t just wider; it has been multiplied by the number of agents each developer runs. A single compromised AI dependency can cascade simultaneously across every environment where agents operate.

The Open-Source AI Gateway Problem

LiteLLM’s role in the ecosystem is worth examining closely. At its core, it is an open-source AI gateway: a single routing layer sitting between your applications and every LLM API you use. That architectural position is powerful. It is also precisely what makes it such an attractive and devastating attack vector.

When you route all your AI traffic through a single open-source package, you are placing extraordinary trust in that package’s integrity, its maintainers’ security practices, and the pipeline that publishes it. The LiteLLM compromise showed how fragile that trust can be. A single unpinned dependency in a CI workflow was all it took to hand attackers the keys to an organization’s entire AI infrastructure, including every model API key, every cloud credential and every secret in the environment.

To be clear, we are not suggesting that you stop using AI gateways. We are, however, making a strong argument for building them on proper, secure foundations. An AI gateway that sits at the center of your agentic supply chain needs to be governed with enterprise-grade controls, not pulled from a public registry in the hope that nothing goes wrong.

Building a Trusted Agentic Supply Chain

The answer is not to slow or ban AI adoption. It is to stop trusting the AI layer blindly and start governing it with the same rigor applied to the rest of the software supply chain.

Precisely for this reason, we introduced JFrog AI Catalog and its AI Gateway, built from the ground up around the principle that your agents are only as trustworthy as what they consume, build, and ship.

The AI Gateway: Trust by Design

Unlike an open-source gateway that routes traffic and hopes for the best, the JFrog AI Gateway is designed as a secure, policy-enforced connection layer. It routes all AI consumption (models, MCP servers, and agent skills) through a single, centralized control point, bringing your entire AI footprint under unified governance. It also ensures that every request is authenticated and every downstream asset is thoroughly vetted before access is granted.

This is the architectural difference that matters: the JFrog AI Gateway doesn’t just proxy traffic – it enforces trust at the point of connection, before an agent ever touches an unverified model or MCP server.

The JFrog MCP Registry: No More Blind Trust

The JFrog MCP Registry is a direct response to exactly the class of attack that postmark-mcp and LiteLLM represent. It treats MCP servers as fully governed artifacts in the same way JFrog has always managed and secured software packages, with scanning, policy enforcement, and versioning built in.

At its core, the MCP Registry delivers:

Native security by design

Proactively blocking malicious or non-compliant MCP servers before they are downloaded or executed by agents, rather than detecting them after compromise.

Centralized governance

Developers access a registry of pre-approved MCP servers directly from their IDEs (Cursor, Claude Code, VS Code), without needing to pull from public registries.

Enterprise-grade policy enforcement

Replacing “blind trust” with granular, project-level permissions that control precisely which MCP servers agents are authorized to connect to.

A local MCP Gateway

A lightweight proxy that transparently handles authentication and permission checks on the developer’s machine, ensuring coding agents only connect to pre-vetted servers.
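The idea behind pre-vetted servers can be illustrated with a small allowlist check. This is a hypothetical sketch and not JFrog’s implementation or API: the `McpServerRef` type, the `is_allowed` function, the server name, and the placeholder digests are all assumptions made for illustration. The point it shows is that approval is bound to an exact name, version, and content digest, so a new release of a previously trusted server is not trusted automatically.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class McpServerRef:
    """Hypothetical identity of an MCP server artifact."""
    name: str
    version: str
    sha256: str  # digest of the published artifact


def is_allowed(candidate: McpServerRef, approved: set[McpServerRef]) -> bool:
    """Allow a connection only if name, version, and digest all match an approval."""
    return candidate in approved


# Placeholder digests for illustration only; real entries come from a vetting process.
APPROVED = {
    McpServerRef("example-mail-mcp", "1.0.15", "a" * 64),
}

if __name__ == "__main__":
    vetted = McpServerRef("example-mail-mcp", "1.0.15", "a" * 64)
    new_release = McpServerRef("example-mail-mcp", "1.0.16", "b" * 64)
    print("vetted release allowed:", is_allowed(vetted, APPROVED))
    print("unreviewed release allowed:", is_allowed(new_release, APPROVED))
```

Under this model, a change like the one introduced in postmark-mcp 1.0.16 would need to pass review before any agent could connect to the new version.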

The postmark-mcp attack succeeded because a developer pulled an MCP server from a public registry and extended it full trust. The LiteLLM attack succeeded because a build pipeline implicitly trusted an unpinned open-source package. The JFrog MCP Registry is designed so that neither situation can occur without alerting development and operations teams: every MCP server is a governed artifact, and unapproved servers are blocked at the gate.

A Single System of Record for the Entire Agentic Supply Chain

The broader vision is a unified system of record that governs every layer of what agents consume and produce: Models, MCP servers, agent skills, AI-generated code, and assembled artifacts, all managed in one place, under the same security and compliance controls as the greater software supply chain.

This is what JFrog calls a Trusted Agentic Supply Chain. It extends the same principles that have governed software supply chains for decades, such as immutability, provenance, policy enforcement, and continuous scanning, to every new AI asset class that agents depend on. It is validated by integrations with partners like NVIDIA, whose OpenShell runtime uses the JFrog Platform as its governed endpoint for distributing verified AI skills at enterprise scale.

How JFrog Helps Protect Your Agentic Supply Chain

By partnering with JFrog you can start protecting your organization from the latest supply chain attacks by taking the following steps:

- Audit your AI dependency chains including transitive dependencies pulled by agents and MCP plugins

- Pin versions – the LiteLLM attack exploited unpinned dependencies at multiple points in the chain

- Stop treating open-source AI gateways as inherently trusted infrastructure – route AI traffic through governed, enterprise-grade gateways with policy enforcement.

- Govern your MCP servers – use the JFrog MCP Registry to ensure agents can only connect to vetted, approved servers

- Rotate credentials aggressively after any suspected compromise – assume full system compromise, and do not stop at removing the package

- Build a trusted AI catalog – use JFrog AI Catalog as your single system of record for models, MCPs, skills, and AI packages
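The pinning step in the list above can be checked automatically. The sketch below scans the text of a pip requirements file and reports entries that are not pinned to an exact version with `==`. The function name, the regular expression, and the package names in the sample are illustrative assumptions. Exact pins narrow the window for surprises, and pip’s hash-checking mode (`--require-hashes`) goes further by also verifying artifact digests.

```python
import re

# An entry counts as pinned only if it uses "==" with a concrete version (no wildcard).
PINNED_RE = re.compile(
    r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?\s*==\s*[^\s;*]+(?=[\s;\\]|$)"
)


def unpinned_requirements(requirements_text: str) -> list[str]:
    """Return requirement lines that are not pinned to an exact version."""
    findings = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()
        if not line or line.startswith("-"):
            continue  # skip blanks, comments, and pip options such as -r or --hash
        if not PINNED_RE.match(line):
            findings.append(line)
    return findings


# Hypothetical requirements used only to exercise the check.
SAMPLE = """\
example-pkg==1.2.3
other-pkg>=2.0
# a comment line
third-pkg
wild-pkg==1.*
-r base.txt
"""

if __name__ == "__main__":
    for line in unpinned_requirements(SAMPLE):
        print("not pinned:", line)
```

This covers only one file format; lockfiles produced by other tools and container base images need their own equivalent checks.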

Takeaways

The SDLC has changed. Developers are no longer the only actors in the pipeline; they are orchestrators of agent fleets, each consuming AI assets at machine speed. Attackers are following them into every new environment they inhabit, including their agentic workflows.

LiteLLM was a wake-up call. It showed that the open-source AI gateway sitting at the center of your AI infrastructure is not neutral infrastructure, but rather a high-value target. The question is whether your trust model is ready for that reality.

At JFrog, we believe trust must be built into the platform and not assumed or bolted on after the fact. That means curating open-source packages, governing AI models, securing MCP servers through a registry that enforces policy before execution, and routing all AI traffic through a gateway that puts trust first.

Because in an AI-accelerated world, speed is only an advantage if what you’re building on can be trusted.

Book a demo with one of our experts to see JFrog solutions in action. You can also learn more about JFrog AI Catalog, the JFrog MCP Registry, and JFrog Curation.