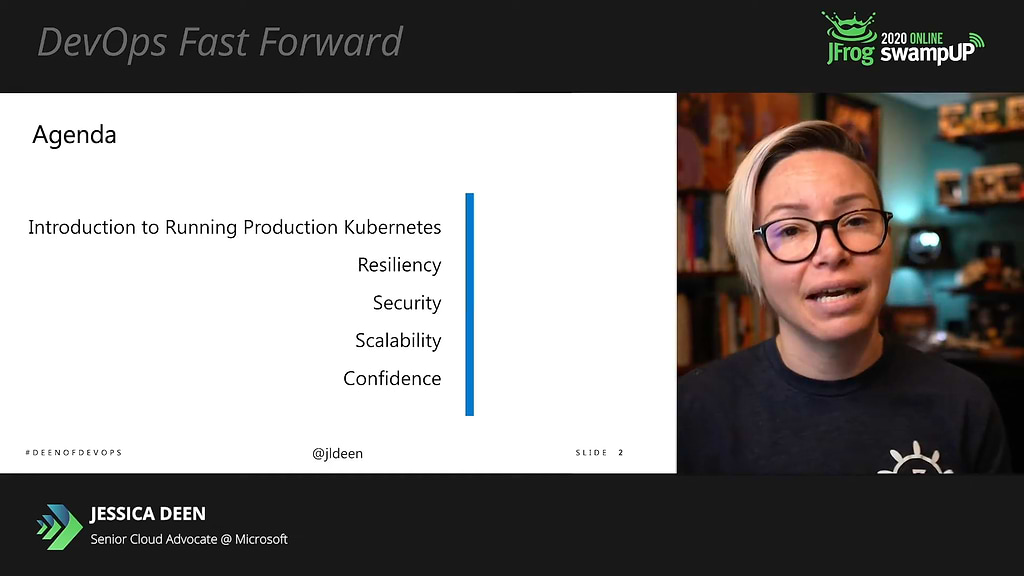

Kubernetes in Production with Jessica Deen at swampUP 2020

Jessica Deen’s swampUP 2020 talk takes Kubernetes to the next level. If you want to understand how to make your K8s implementation enterprise-grade, this is the session for you. JFrog was proud to announce Jessica Deen, Microsoft Azure Avenger, as the winner of the inaugural swampUP Carl Quinn Speaker Award. Jessica’s talk was full of demos and practical advice, and it covered a wide range of real-world situations.

Jessica, Sr. Cloud Advocate at Microsoft, walks through the kinds of real-world considerations that can keep you up at night: architecture, resilience, security, and scalability. She then caps the conversation with how to build confidence in your implementation. Jessica mentions and demonstrates various tools and technologies, but she’s careful to point out that these are just her examples and that other options are available.

Here’s an overview of what she covered:

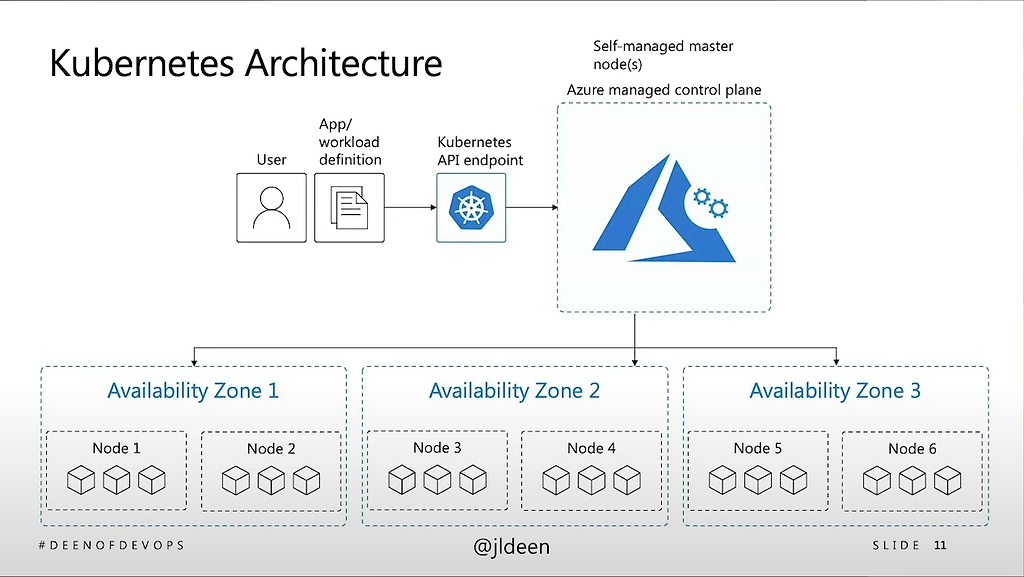

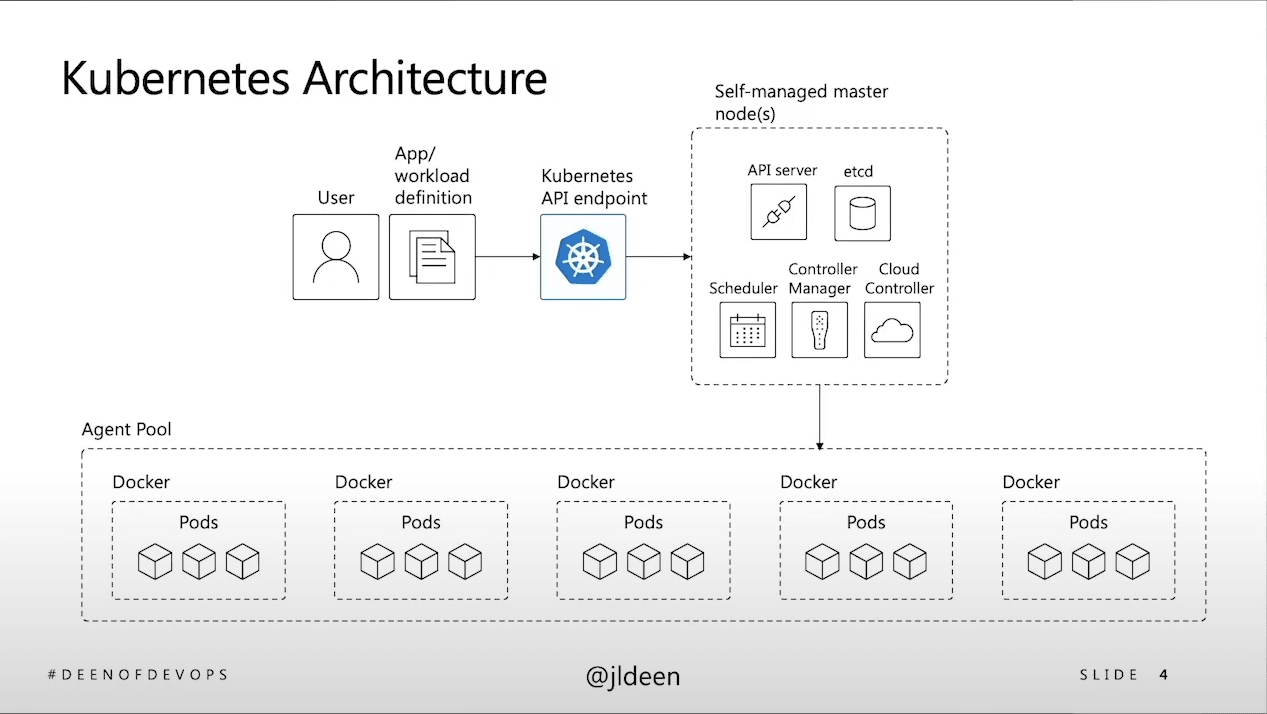

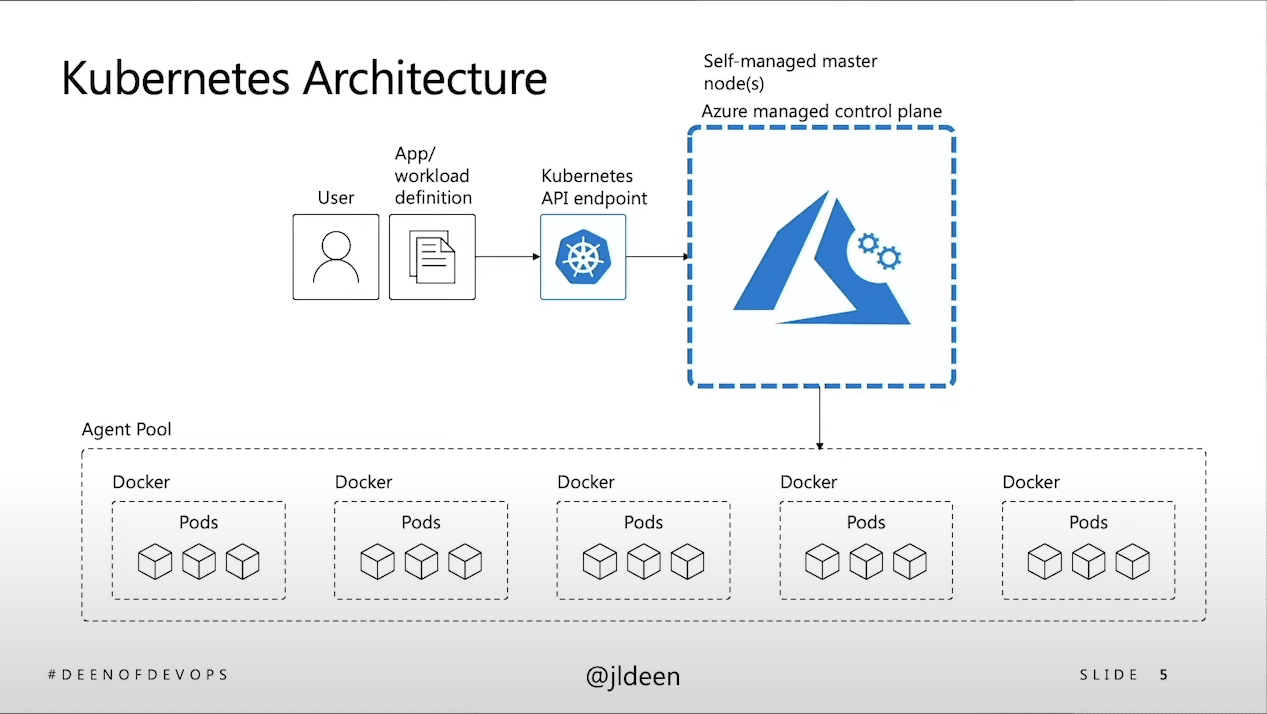

Kubernetes Architecture

The first of many key decisions is whether to manage your K8s control plane on premises or hand it off to a public cloud provider. The answer requires an understanding of the aspects of the K8s architecture that Jessica covers. She also covers the differences between basic-level and production-level K8s. Basic level is good for PoCs, but it’s not something that you can take into production for a long-standing application. While deploying a local Kubernetes environment can be a simple procedure that’s completed within days, an enterprise-grade deployment is quite another challenge. Jessica describes the additional parameters you need to consider for a production deployment. If you don’t want surprises, you need to understand these differences.

Resilience: handling application failure

How can you handle failure when it comes to production-level Kubernetes? This has a lot to do with putting your pods and containers in different availability zones, that is, physically separate data centers within a region. Jessica covers the things to consider and how to spread your deployments and replica sets across zones. In the context of CI/CD and Infrastructure-as-Code, Jessica discusses what the deployment YAML files or Helm charts look like for deploying to different availability zones.
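To make the idea of spreading pods across zones concrete, here is a minimal sketch that builds a Deployment manifest in Python and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. It is not the manifest Jessica showed in her talk. It assumes a cluster recent enough to support `topologySpreadConstraints` and nodes that carry the well-known `topology.kubernetes.io/zone` label; the function name `zone_spread_deployment`, the app name `web`, and the image reference are hypothetical.

```python
import json

# Well-known node label that cloud providers typically set to the node's zone.
ZONE_KEY = "topology.kubernetes.io/zone"


def zone_spread_deployment(name, image, replicas=3):
    """Build a Deployment manifest whose pods are spread evenly across zones."""
    labels = {"app": name}  # hypothetical label scheme, used for selection and spreading
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "topologySpreadConstraints": [
                        {
                            # Allow at most one pod of difference between any two zones.
                            "maxSkew": 1,
                            "topologyKey": ZONE_KEY,
                            # Leave a pod pending rather than pile it into one zone.
                            "whenUnsatisfiable": "DoNotSchedule",
                            "labelSelector": {"matchLabels": labels},
                        }
                    ],
                    "containers": [{"name": name, "image": image}],
                },
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical app name and image, for illustration only.
    print(json.dumps(zone_spread_deployment("web", "example.com/web:1.0"), indent=2))
```

Setting `whenUnsatisfiable` to `ScheduleAnyway` instead would favor availability of capacity over strict balance; which is right depends on the application.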

Security: network policy and abstraction

Jessica focuses on how to handle network security, specifically network policy. This is an important consideration for modern applications with microservices spread across a network. Jessica describes how Calico, an open source network security project, makes it easy to apply a network policy. With Calico, you can specify network policy declaratively in a YAML file or manifest.
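As a rough illustration of what a declarative network policy looks like, the sketch below builds a standard Kubernetes `NetworkPolicy` (API group `networking.k8s.io/v1`), which a network plugin such as Calico can enforce. It is an assumption-laden example rather than the policy from the talk: the helper `allow_ingress_policy`, the namespace, the `app` labels, and the port are all hypothetical. The output is JSON, which `kubectl apply -f` accepts in the same way as YAML.

```python
import json


def allow_ingress_policy(name, namespace, target_app, client_app, port):
    """Build a NetworkPolicy that lets only client_app pods reach target_app pods."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Pods this policy applies to.
            "podSelector": {"matchLabels": {"app": target_app}},
            # Once a pod is selected for Ingress, traffic not listed below is denied.
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [{"podSelector": {"matchLabels": {"app": client_app}}}],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical names: only "frontend" pods may call "backend" pods on port 8080.
    policy = allow_ingress_policy("backend-from-frontend", "demo", "backend", "frontend", 8080)
    print(json.dumps(policy, indent=2))
```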

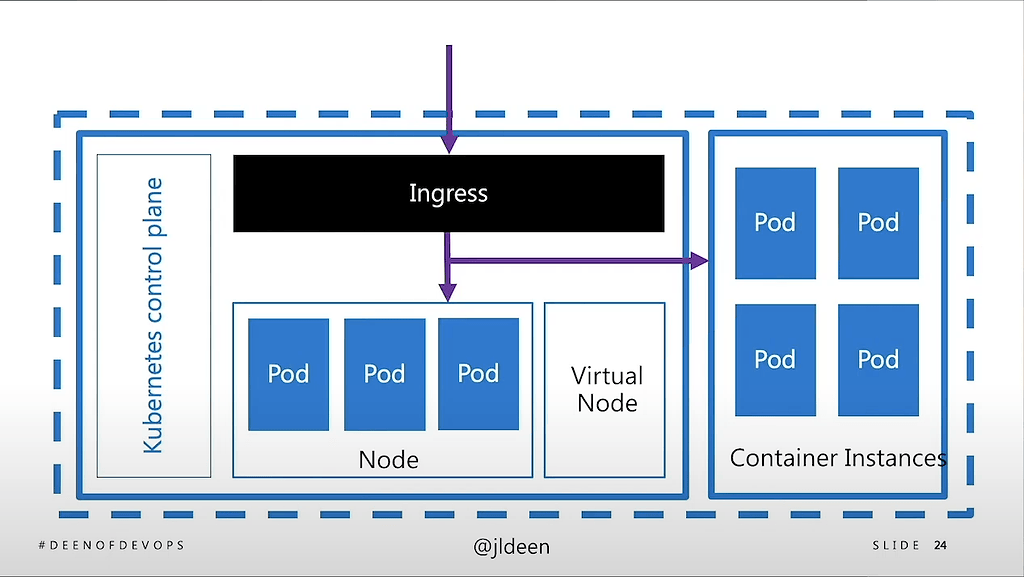

Scaling: add in serverless when seconds matter

Often, seconds matter when you’re scaling your application. You can easily lose tens or hundreds of thousands of transactions even if it takes just a few minutes to spin up nodes. So it’s important to understand the different ways in which you can scale K8s in production. Jessica talks about different styles of scaling K8s and when to use each approach. These approaches include adding or removing nodes and using virtual nodes (through a project called Virtual Kubelet), along with how to handle networking with virtual nodes. Jessica describes an approach to scaling that includes virtual nodes, RabbitMQ as a message broker running on virtual nodes, and a K8s autoscaling technology called KEDA.
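The arithmetic behind queue-driven scaling can be sketched in a few lines. The function below is a simplified model, not KEDA’s actual implementation: it assumes each replica should handle a fixed number of queued messages and clamps the result to configured bounds. The name `desired_replicas` and its parameters are hypothetical.

```python
import math


def desired_replicas(queue_length, target_per_replica, min_replicas, max_replicas):
    """Estimate how many replicas are needed to drain a queue.

    Simplified model: one replica per `target_per_replica` queued messages,
    rounded up, then clamped to [min_replicas, max_replicas].
    """
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    wanted = math.ceil(queue_length / target_per_replica)
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    # 95 queued messages at 20 per replica needs 5 replicas.
    print(desired_replicas(95, 20, min_replicas=1, max_replicas=30))
```

The faster new replicas can start, the more closely the running count tracks this number, which is where virtual nodes help compared with waiting minutes for new nodes to join the cluster.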

Gaining DevOps Confidence

Confidence results from including everyone possible in the review process so that you can confidently approve changes. You need to build confidence in the infrastructure, in application security, in the health and integrity of your binaries, and in the debugging, pull requests, and changes you make as you move from sprint to sprint. Jessica demonstrates how to use GitHub Actions to trigger workflows that verify pull requests and even trigger Slack notifications to add ChatOps to the process. When it comes to binary artifacts, Jessica likes JFrog because she can host her packages, Helm charts, and Docker images. She also likes being able to use JFrog Xray to run security scans in one location. Jessica also encourages the use of Infrastructure-as-Code as a way of building confidence.