It’s a Wonderful DevOps Life

In Frank Capra’s 1946 film, “It’s a Wonderful Life,” George Bailey, in the depths of his despair, wishes he had never been born. So an angel shows him what that world would have been like.

I sometimes feel the need of an angel, too.

Not for the same reasons, of course. Our enterprise customers love Artifactory for the way it helps their DevOps container pipeline run smoothly from code to cluster. Yet I often find a misconception in the container ecosystem: because Kubernetes orchestrates Docker images, Artifactory’s chief worth must be its ability to serve as a Docker registry. That role is very important, but Artifactory provides so much more.

Artifactory brings developers freedom in their choice of languages and frameworks, and confidence in their builds. It offers operations a sound strategy for how to integrate a variety of package managers and frameworks into the enterprise DevOps process.

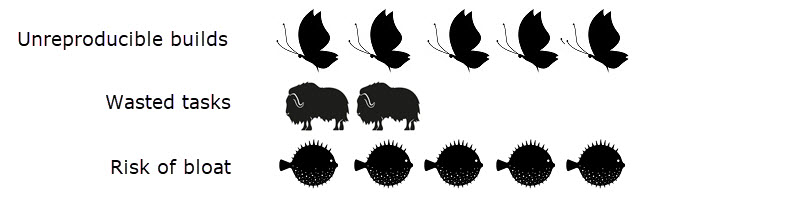

You likely have some essential concerns when choosing your DevOps setup:

- Can developers create deterministic, reliable builds?

- How many yaks do I have to shave (one-off tasks that don’t add value)?

- What is sneaking into my builds (bloat and/or risks) from dependencies?

Fortunately, Artifactory addresses all of these:

- Caches and archives all dependencies from public repositories into local ones for fast, reproducible builds. These include OS, language, framework, container, and configuration artifacts.

- Integrates with most package managers for easy setup and maintenance. Integrates with most popular CI servers for automation with minimal oversight.

- Provides visibility into your Docker images, so you know exactly what’s in them at any time.
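
The caching behavior in the first point can be pictured with a toy pull-through cache. This is a minimal sketch for illustration only: `PullThroughCache`, `fake_remote`, and the package name are hypothetical, everything lives in memory, and none of it reflects Artifactory’s actual API or storage.

```python
from typing import Callable, Dict, Tuple


class PullThroughCache:
    """Toy in-memory stand-in for a caching repository (hypothetical)."""

    def __init__(self, fetch_remote: Callable[[str, str], bytes]) -> None:
        self._fetch_remote = fetch_remote
        self._store: Dict[Tuple[str, str], bytes] = {}
        self.remote_calls = 0  # how often we had to go to the public repository

    def get(self, name: str, version: str) -> bytes:
        key = (name, version)
        if key not in self._store:
            # First request: fetch from the remote and archive a local copy.
            self.remote_calls += 1
            self._store[key] = self._fetch_remote(name, version)
        # Every later request is served from the local copy.
        return self._store[key]


def fake_remote(name: str, version: str) -> bytes:
    """Pretend public repository; returns made-up artifact bytes."""
    return f"{name}-{version}".encode()


cache = PullThroughCache(fake_remote)
first = cache.get("left-pad", "1.3.0")
second = cache.get("left-pad", "1.3.0")  # served locally, no remote call
```

After both calls, `cache.remote_calls` is 1: the second build gets byte-for-byte the same artifact without touching the public repository, which is where the speed and the reproducibility both come from.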

Touring Pottersville

Artifactory makes these things so natural that it’s easy to take them for granted. Perhaps the best way to understand these benefits is to consider how you would have to accomplish the same things without Artifactory.

What would your DevOps pipeline for containers look like if Artifactory had never been born?

Option 1: Always Build from Remote Repositories

Without Artifactory’s local cache of your remote dependencies, you might choose to pull them directly from the remote repository every time you build your application.

In this scenario, you would always incorporate the latest version of the dependency into your build. But you would never have full control over what is in your build, and no certainty that the version you are including is safe or compatible with the rest of your application. Since the code in the remote source can and will change at any time, you would be unlikely ever to reproduce the same image from the same build procedure.
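
To make the drift concrete, here is a small simulation of a build that asks for “latest” versus one that names an exact version. It is a sketch under stated assumptions: the `resolve` helper, the `webframework` package, and the two index snapshots are all invented for illustration, and real package managers resolve versions with far more nuance.

```python
from typing import Dict, List


def resolve(index: Dict[str, List[str]], name: str, spec: str) -> str:
    """Return the version a build would pull for `name`.

    `spec` is either "latest" (floating) or an exact version string (pinned).
    Versions in `index` are assumed to be listed in publish order.
    """
    versions = index[name]
    if spec == "latest":
        return versions[-1]  # whatever was published most recently
    if spec not in versions:
        raise LookupError(f"{name}=={spec} not found")
    return spec


# Hypothetical state of the remote index on two consecutive days.
monday = {"webframework": ["2.0.0", "2.0.1"]}
tuesday = {"webframework": ["2.0.0", "2.0.1", "2.1.0"]}

# Same build procedure, run on both days.
floating = (
    resolve(monday, "webframework", "latest"),
    resolve(tuesday, "webframework", "latest"),
)
pinned = (
    resolve(monday, "webframework", "2.0.1"),
    resolve(tuesday, "webframework", "2.0.1"),
)
```

The floating build picks up `2.0.1` on Monday and `2.1.0` on Tuesday, while the pinned build gets `2.0.1` both times. Nothing in your own source changed between the two runs.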

For the same reason, you would have no idea what other code might be introduced into your build, or whether it is needed or secure. If you build everything directly from the remote resource, your builds are more likely to break.

Relying on a remote public repository for your build also carries the risk of slowing your CI process down whenever traffic loads are heavy on the network. Worse, a failure of the remote public repository at the very moment you need to produce a critical patch could be catastrophic to the business.

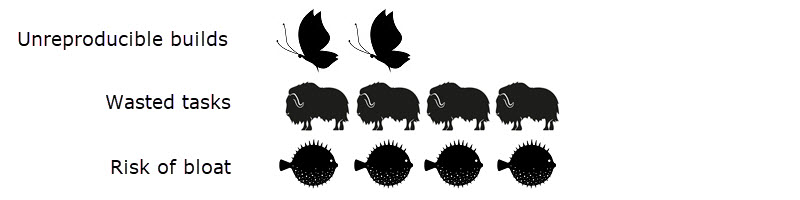

Option 2: Build from Existing Docker Images

Without Artifactory’s repository management features, you might select a Docker image already in Docker Hub that matches the language framework version and operating system distribution you need, and include that in your final image build.

This scenario would help you to create reproducible builds, but only as well as you version your Docker images. You would need to take responsibility for what you let into production with little help from your tools.

Without Artifactory, you would most likely have little or no visibility into what the Docker image you select actually contains. Figuring that out could be a cumbersome process.

The image might contain components that are malicious or just unused. The Docker build phase itself presents opportunities for breakage, delays, and unintended bloat that can slow your release process. The final image you produce might not conform to your operations policy.

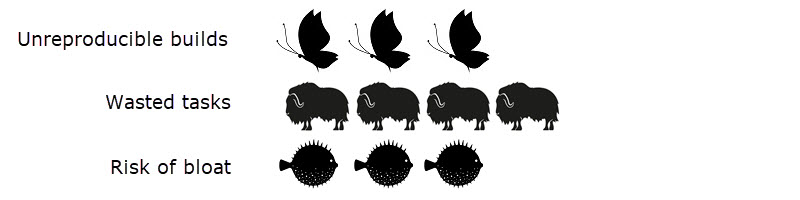

Option 3: Construct a Base Image Locally

Without Artifactory’s repository management features, a developer might construct a base image on their local workstation and push it to the Docker registry. Then, the CI process can combine that base image with their application to build the final container image.

This approach, to create a maintained “golden base image,” is a common practice for creating deployable application containers that, in the short term, can be readily reproduced. In this scenario, the developer’s workstation (where the golden base image was built) effectively acts as the local cache for your remote dependencies.

This procedure requires careful construction and the ability to perform the base image build independently of the CI procedure. It works best if you don’t need to change base images often and you are satisfied being pinned to a given stack version. It also expects developers to devote their time to building images, rather than coding.

In practice, this seemingly simple solution can produce a sequence of problems that break your build automation and force you to step in to resolve them. By their nature, these release blockers will most likely need your intervention at the most inopportune time, such as when you need to ship a critical fix.

For example, you might need a new base image to roll forward some OS packages and take a new framework patch. All automated CI runs must be halted while you create a new base image with the new pieces and upload it to the Docker registry. Since changes to the base image and changes to your source code are recorded in different change logs, you must test your new changes against the new Docker base image. If you have to rebuild, even with the same source code, you will get different results, forgoing a deterministic build.

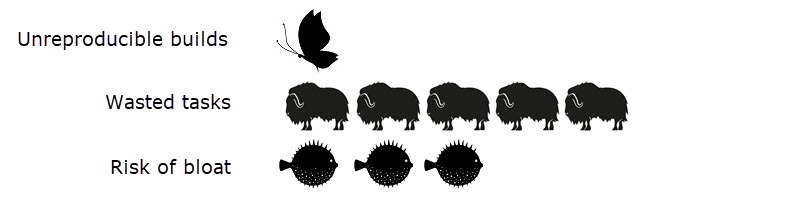

Option 4: Host Individual Local Artifact Repositories

Without Artifactory’s local cache of your remote dependencies (such as npm, Maven, or PHP Composer packages), you might choose to create and manage your own on a separate server.

If you chose to do this, you would gain a key benefit that Artifactory offers: always building with a known version of your dependencies. You would be able to execute two builds in succession and produce an identical Docker image.
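
One way to check that claim for your own pipeline is to fingerprint the inputs of each build and compare. The sketch below is an assumption-laden illustration: `build_fingerprint` is a hypothetical helper that hashes source and dependency bytes with SHA-256, and it is not how Docker computes image digests (which also depend on things like layer metadata and timestamps).

```python
import hashlib
from typing import Dict


def build_fingerprint(source: bytes, dependencies: Dict[str, bytes]) -> str:
    """Hash the application source plus every dependency, in a stable order."""
    digest = hashlib.sha256()
    digest.update(hashlib.sha256(source).digest())
    for name in sorted(dependencies):  # sort so dict ordering cannot matter
        digest.update(name.encode())
        digest.update(hashlib.sha256(dependencies[name]).digest())
    return digest.hexdigest()


# Made-up inputs: one source tree, two dependencies served from a local cache.
source = b"print('hello')"
cached_deps = {"lib-a": b"lib-a 1.0 bytes", "lib-b": b"lib-b 2.3 bytes"}

build_1 = build_fingerprint(source, cached_deps)
build_2 = build_fingerprint(source, cached_deps)

# Simulate an upstream change slipping into one dependency.
drifted_deps = dict(cached_deps, **{"lib-b": b"lib-b 2.4 bytes"})
build_3 = build_fingerprint(source, drifted_deps)
```

Here `build_1` and `build_2` match because every input is identical, while `build_3` differs even though the source code never changed. A mismatch like that is your signal that something other than your code moved.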

This solution, however, is only as flexible as you are clever and attentive. You become fully responsible for that infrastructure: when and how your local cache is updated, whether you can roll back those updates if you need to, and how the archive is kept pruned.

It’s also less likely that your implementation will be enterprise-grade, able to work reliably with tools from multiple vendors at high availability. Each new technology you adopt requires additional effort, resources, and management, or even a completely new system to accommodate it. You’ve adopted a herd of very hairy, very needy yaks.

You also would be unlikely to have the cross-system traceability of your builds that grants you awareness of how your Docker images were built. If unwanted things sneak into your builds despite your best efforts, you would never know. Your risk of bloat, while lower, is still very present.

Back to Bedford Falls

“Strange, isn’t it?” the angel Clarence tells George in the film. “Each man’s life touches so many other lives. When he isn’t around he leaves an awful hole, doesn’t he?”

That’s a fair description of Artifactory in the DevOps process, too. The examples above show how many interdependencies there are in an automated CI process that Artifactory helps to resolve.

Artifactory helps you to treat Docker images as ephemeral packaging while you work to get your applications ready for production. Once they’re ready to deploy, Artifactory helps your automated CI process minimize the number of times Docker images need to be rebuilt, to ensure their stability.

Did a bell of recognition just sound in your head? Then perhaps an angel just got its wings.

To learn more about how Artifactory makes your DevOps life wonderful, read about 10 Reasons to use a Binary Repository Manager.