Best practices for scaling DevOps across sites

Scaling up your development organization typically involves spreading development over multiple locations around the globe. However, with distributed development comes its own set of issues, including ensuring reliable access to required software packages and artifacts for teams collaborating across time zones. In this live JFrog webinar, join JFrog Solutions Engineer Rakesh Krishna as they cover how to easily scale development through the use of multiple JFrog deployments.

We’ll cover: How to avoid common multisite pitfalls including latency and version issues Traditional binary replication and use cases How to use federated repositories and the benefit of this approach.

Register here to see this resource

Video Transcript

Sean Pratt:

Hello. Good morning, good evening, good afternoon to those joining us from around the world. My name is Sean Pratt, your friendly marketing manager here at JFrog. Before we get started with our presentation today, I want to go over a few housekeeping items. This session will be recorded and made available for you, typically within one or two business days after we wrap up here today. If you have questions, please drop them into the chat functionality. It should be on the bottom or left hand side of your screen. We’ll have people here to answer those questions for you, and Rakesh will be taking a few of those live at the end of this session. Just a reminder, the webinar that you’re here for today is on scaling DevOps across different sites, the challenges that poses, and how to tackle them. And joining us today to present is Rakesh Krishna, who’s a seasoned DevOps professional and a solution engineer here at JFrog. So without any further ado, I’m going to go ahead and hand it over to Rakesh so he can get us started. Rakesh, take it away.

RAKESH KRISHNA:

Thanks a lot, Sean, for the lovely introduction and welcome everybody. Good morning, good evening, based on wherever you are. And today’s topic for this webinar is Best Practices for Scaling DevOps Across Sites. And just like Sean mentioned, my name is Rakesh, part of the solutions engineering group here at JFrog. So let’s get started. So a quick bit about JFrog as a company itself. So JFrog offers a DevOps platform, which is really end-to-end. End-to-end because we can help you address a whole bunch of requirements or use cases right from the time when your artifacts are built, all the way to the deployment side of things when your artifacts actually get deployed. Along with that, we also have a focus on continuous security because we believe in the fact that security should not be an afterthought, but should definitely be part of your regular CI/CD workflow in an automated fashion. And so we do enable that.

Also, the entirety of JFrog platform scales to infinity, meaning there’s no restrictions or there’s no limitation as to the number of repositories, the number of users, any limitation on the amount of data that you can store. We can keep on storing and it scales really well. That’s exactly why a lot of our enterprise customers love and use JFrog. We also have hybrid and multi-cloud presence, meaning which you can post any of our JFrog products either in your own data center or on your own cloud provider regions, for example. Or you can basically have the SaaS offering that we have on any of the popular three cloud providers as well. And that is exactly how we support both hybrid and multi-cloud. We are universal, meaning we support more than 30 different package types. So all the popular package types that are currently under usage are very much supported out of the box by JFrog.

And we also integrate with basically any particular CI tool, any popular CI tools that are out there in the market, which is why we can universally in with any CI tool that you could have in your regular CI/CD Workflow. So here’s a quick look at the DevOps platform that JFrog offers. As you can see, there’s a whole bunch of products that we have as part of our platform. So let’s go one by one. So let’s start with Artifactory, which is the cornerstone of the entire JFrog platform. Artifactory, as you might probably know, is the product that you can use to store and manage all your artifacts. When I say all your artifacts, I mean both the ones that you develop internally inside your company as well as the third party dependencies that you typically bring in from outside, which are pulled during dependency resolutions while the builds are going on.

So both of these types of artifacts can very well be stored in your Artifactory instance. One second. Yeah, so along with this, we also support more than 30 different package types, so all the popular package types like npm, Docker, Java, Python, Newgate, so on and so forth, are very much supported out of the box by Artifactory. So irrespective of what different package types your teams are using or your developers are using inside your company, you can have all of them use Artifactory for all their artifact management needs. The next product that we have is Xray, which is our security offering, which is what can help you bring in security in an automated fashion by basically enabling some security and license compliant practices. And Xray really works hand in hand or complementary with Artifactory because Artifactory is the product that you can use to store your artifacts along with which you can also use Xray to make sure whatever you’re storing here are also satisfying all the security and the licensing benchmarks that you could have in your company.

And all of these are done in an automated fashion without the necessity of any manual intervention whatsoever. So that is a quick bit about Xray. Next up is JFrog Distribution. As the name says, Distribution is all about distributing the artifacts that you already have in your Artifactory instance so that they are close to wherever your runtime environments are or your production workloads could be. So it doesn’t matter where your runtime environments are, it could be in your own data centers or on your public clouds or even on your IoT devices, so Distribution is the product that can help you in getting your artifacts be very, very close to wherever these destinations are so that you can have your deployment tools, download the appropriate artifacts from Artifactory, and then go about deploying them on their destinations. Then we have JFrog Connect, which is actually a part of an acquisition that we just did last year.

This is really the project we can help you in configuring over-the-air or OTA updates for your IoT devices. So this basically helps you in the management of all your IoT devices, configuration of OTA updates, as well as monitoring of all these different IoT devices. Next up we have Pipelines, which is our CI/CD orchestration tool, which helps you orchestrate all the set of tasks right from your version control system all the way to the deployment side of things. Last but not the least, we also have Mission Control, which gives you a single pane of glass view of all your different deployments that you could have across multiple locations and Insight is the one that gives you some metrics based on which you can gather some insights across all the different deployments you could have of JFrog platform. So this was a quick bit or a quick overview of the whole platform by JFrog.

Now let’s switch our focus to the main product that is Jfrog Artifactory in itself because that is what the rest of the webinar is all about. So here is a whole bunch of benefits that Artifactory offers. So like I said, it’s basically a universal binary repository manager, meaning it offers repositories for your binaries or for your artifacts so that you can go on to store all these binaries in one single location. And it is also universal in nature meaning it supports close to 30 different package types. So like I mentioned earlier on, all the popular package types like npm, Docker, Java, Python, Go, Ruby, all of them are very well supported along with which it also offers you to store not only your locally developed artifacts, but also the remote dependencies that are typically available in the public registries. Those things can be cached on your private Artifactory instance, and that is exactly how the universality approach comes into play.

And this is exactly why and how Artifactory can help you in bringing in some centralization and standardization and that is exactly how it can serve as a single source of truth or a system of record for all the different development activities that could be going on across different teams inside your company. And due to this exact nature that it supports 30 different package types, all the package specific native comments like npm specific comments or Docker specific comments can very well be used by using your npm clients or Docker clients when you interact with Artifactory. And that is exactly how you can bring in some automation for your builds and build automation necessarily means you’re making your builds faster or quicker, okay? So that’s the automatic benefit that automation gives you.

And like I said early on, it doesn’t matter what CI tool you could be using, it could be Jenkins, GitHub Actions, GitLab CI, or whatever, we can definitely integrate with any of those CI tools with Artifactory so that you can automate your builds so that your builds can go on in an automated fashion by pulling dependencies. And once the builds are done, all the outputs of all these builds can be pushed back to Artifactory. And that brings us to the next benefit, which is automation will definitely make your builds faster and Artifactory also will make your builds be more reliable because of the fact that you can cache all the necessary dependencies in your private Artifactory instance. So even if the public registry is down or remains inaccessible for whatever reason, your artifacts or your dependencies are still very much available so that your builds can go on without any issues whatsoever. It also offers support for various types of metadata.

Artifactory automatically generates some metadata as soon as you upload something new like times stamps, the users who uploaded, so on and so forth, along with which the users can also provide their own custom metadata, which we call as user provided metadata. And all these metadata will come in very handy for use cases like searching, sorting, filtering, so on and so forth, which will enable you to do any of your custom workflows downstream, for example. And the next benefit is again, like I said, you can use Artifactory along with Xray to also ensure security and license compliance controls by enabling or by defining some policy so that all these things happen automatically as soon as something gets published or something gets cached in your Artifactory instance. And last but not the least, Artifactory also supports the multi-site needs as well as the disaster recovery needs, which are actually the topics of today’s webinar so we’ll get into this as we move on.

So this is a quick list of all the different benefits that Artifactory offers. Now having said this, we do see users who use some generic storage solutions like S3 buckets or shared network drives, or even Google drives for that matter, for the artifact storage purposes. Now this is fine, but it’s just that these things don’t offer the benefits that Artifactory offers because like I said, these are generic storage solutions which are not specifically made for the needs of artifacts themselves because of which you basically will lose out on bringing in automation for your builds because of the fact that these generic storage solutions don’t speak the native languages of any of these package types. All the packet specific network commands are not valid to be used with any of these generic storage solutions because of which you cannot bring in any build automation.

So when there is no automation, obviously you are making your builds a lot slower and there’s also a lack of reliability as well because these storage solutions do not have the concept of caching all the required dependencies, which Artifactory does. And that is exactly why whenever your builds are run, those builds will basically go to the upstream repositories like public registries for example. Then that is where the artifacts are downloaded from to resolve the dependencies. And if that is down or remains inaccessible for whatever reason, your builds will basically fail or will take a lot longer to finish. That is exactly the benefit that Artifactory offers because of the fact that it can support caching of all the required dependencies upfront so that your builds don’t have to reach out to the public registries every single time the builds are run. So these were a couple of benefits that Artifactory offers.

Now having said that, let’s take a look at today’s landscape. That is, first of all, everyone is distributed increasingly. When I say everyone I’m talking about the teams, team members, developers, managers, so on and so forth. All of them are distributed. And when I say distributed, I mean geographically distributed across different locations. And this could be for a couple of reasons. First of all, some companies do have offices across multiple locations. They have teams across multiple locations. Team members are increasingly remote nowadays because of the fact that people moved remote, especially during COVID starting in 2020. So that phenomenon still exists because of the fact that all the team members are increasingly distributed. And along with that, there’s also the other side of things that is the runtime environments, the production workloads, servers, all these things are again very much distributed as well.

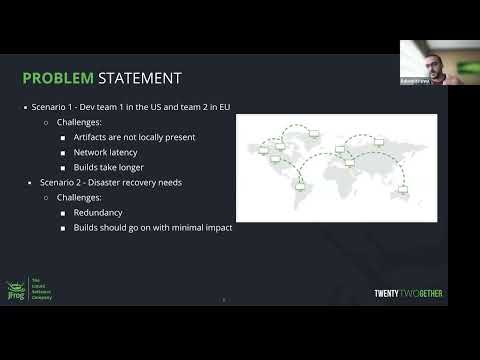

This is mainly because of the fact that companies nowadays are serving user base or customer base who are increasingly global and because of the business needs, they basically would have to have deployments going on across multiple locations, across multiple regions on cloud providers. And that is exactly how you have the production workloads, the servers, the hardwares across multiple locations as well. So that was a quick bit about today’s landscape. Now, given the fact that we have all these things and all these team members being distributed, this also brings us to two different scenarios which defines the problem statement of today’s webinar. So let’s take each of these scenarios one by one. Let’s take a scenario in which there’s the company which has development teams across multiple locations.

Let’s say there’s Team 1 in the US and Team 2 in the European region. And let’s also say that there is cross team dependency going on between these two development teams, meaning which let’s say whatever is being developed by Team 1 is needed by Team 2 and vice versa, which also means that whenever Team 2 needs something that is built by Team 1, which is in the US, since these artifacts that are developed in the US are available just in the US and are not available locally in the EU region where Team 2 is, they basically would have to reach out to the registry which is being used by the team in the US, which means they have to go across the continent, across the Atlantic Ocean and basically download all these necessary artifacts over the network, which introduces some challenges in itself.

First of all, this can impact the performance because of the potential network latency issues that could arise because of the distances between the locations because of which builds can take longer to finish basically, or if the network actually is down, or if it’s not reliable as much, basically builds will fail because of the fact that there’s no network access for downwards to happen. This is scenario one. Scenario two is disaster recovery needs. So disaster recovery by definition means there needs to be some kind of redundancy for the data that is being managed. In this case, since we are talking about artifact storage and artifact management, disaster recovery for artifact management means that we basically need to have redundancy for all our artifacts that are being stored as well as the metadata for all these artifacts. So this redundancy as well as we’ll bring in some business readiness whenever the main instance goes down for whatever reason, so that the switch or the failover can happen with very minimal impact.

So these are the two different scenarios that I present to you. So early on, we just saw that teams and the production workloads are increasingly distributed across multiple locations. Also, we just took a look at two different scenarios in which there were two different teams across different locations which have cross team dependencies as well as there was a disaster recovery unit as well. So given these two scenarios, how do you keep all these DevOps practices or workflows or delivery in sync with each other? How do you make sure that the DevOps delivery is always in sync, is the question that you obviously get in your minds. And that is where I would like to introduce you to the concept of replication that we have that Artifactory does support. Replication, as you might know, by definition means replicating or copying artifacts or whatever data it is from one location to another.

Since we are talking about artifacts specifically, here, in this case, replication means replicating the artifacts from instance one, which is in location one to another instance which is in a different location. Now let’s take an example of a company which has two different teams in two different locations. So how JFrog can support such company is by asking each of these teams which are in different locations to have an Artifactory instance of its own in each of those locations, like instance one in location one and instance two in location two so that Team 1 in location one can interact with instance one. Similarly, Team 2 in location two can interact with instance two and the replication functionality that Artifactory offers will take care of replicating all the necessary artifacts over from one location to another. And now as you can see on the slide, there are two different types of replication that Artifactory supports. By the way, these two types have always been there.

We call these the traditional types of replication, and these two types are push and pull. So let’s go on by one. Push replication, as the name says, is something that the source does, that is the source push replicates the artifacts from the source to the target. So in this type of replication, the replication needs to be configured at the source. And once the config is done, whenever there is a trigger, trigger meaning whenever there is a schedule that has been set up by the users upfront or whenever there is an event which triggers the replication, an event here could mean a simple upload of a new artifact, for example.

So whenever the trigger happens, the source basically push replicates both the metadata as well as the actual artifact itself from the source to the target so that once the artifact replication is done to the target, the team which is in location number two, can interact with the target instance and get all the necessary artifacts right from that instance instead of going all the way across multiple locations or going all the way across to a distance location to access the other instance, which could again introduce some potential latency issues. Similarly, there is another type called pull type. The end goal of this replication is also exactly similar to the push types’ end goal, which is to replicate artifacts from one location to another. It’s just different in the way how this works.

So in the pull type, replication configuration is done on the target site. So whenever there is a trigger on the source, trigger, once again, meaning a schedule that has been set up by the users or an activity that happens like a new artifact upload operation, for example, so whenever this trigger happens on the source, the source basically informs the target about the new event and then the target will basically reach back to the source and pull all the new updates, which includes artifact as well as the metadata from the source. And that is how artifact replication will make sure that the new artifact is also available on the target.

Then once this is done, obviously like I said early on, Team 2, which is in location two, can very well interact with the target instance so that they don’t have to go all the way across continents, for example, to get access to a particular artifact which was developed by a different team in a distant location. So that is exactly what application is all about, and these are the traditional types that we’ve always had from many, many years. Now having said that, we now have a brand new capability, which is called federated repository, which is another type of repository basically. Now, this Repository Federation concept was introduced very recently, sometime last year I suppose, and this makes things much more easier and convenient, especially when it comes to bidirectional replication.

As you can see in this example, there’s three different locations and you have three different instances of Artifactory in three different locations. One in San Francisco, which by the way as you can see is self-hosted in a data center. And there are two more instances, one in London and one in Bangalore, India. Both of them are hosted on AWS regions, EU West and Mumbai agents. And as you can see, there’s a single repository called npm-local that is being used in all the three locations. And once this federation across all these three repos have been set up, what happens is whenever something new gets uploaded in the San Francisco instance, the same artifact and its metadata will be immediately replicated over to the other two locations.

Similarly, whenever something new gets uploaded to the London instance, in a similar fashion, the same artifact and its metadata will be replicated to both San Francisco as well as the Bangalore instance, and that is exactly how these three instances with respect to these three repositories are always in sync with each other so that if there is a team which is in the US, that team can very well interact with this San Francisco instance to get all the latest artifacts from a location which is close to wherever they are. Similarly, a team in India can very well interact with this Bangalore instance and get all the ready artifacts right from there instead of going all the way across the globe and accessing the San Francisco instance again, because of the distance between these two locations, there could be a possibility of some latency issues that could arise, and that is exactly how the performance is drastically improved and the builds go on much more reliably and much more quickly.

And this by the way scales very well, which is exactly why it is being used by most of our enterprise customers because most of them anyway have teams or offices across multiple countries, across multiple locations. And this is exactly how the federation of repositories concept will help all such teams in achieving all such use cases. Similarly, same thing can also be used for disaster recovery needs as well, wherein you can have this to be your main instance. And let’s say this can be your DR or your failover instance. So once the federation is set up, all the activities or all the uploads that are going on over here will be automatically replicated to the other instance so all the artifacts are readily available because the redundancy is brought in because of the replication.

And when and if the main instance goes down for whatever reason, you can immediately switch all your workloads or all your usage over to the failover instance, which is in a different location altogether, which is why it is still up and running, and it can simply go on to continue your builds with very, very minimal business impact, so to speak. So that is exactly how the concept of Repository Federation will help you in replicating artifacts across multiple locations. Now here’s another slide which talks about the same federated repositories in much more detail. Like I explained earlier on, it is basically another repo type on Artifactory, which will help different instances of Artifactory to stay in sync with each other all the time. And this also enables multi-site bidirectional replication, like I showed you in the slide. We had three different instances in three different locations. So that is multi-site and it is bidirectional. Like I said, whatever happens in San Francisco will be automatically brought over to other two and vice versa as well.

And it’s very easy and convenient to use. Why I say that is because this bidirectional replication is very much possible using the traditional push and pull types of replication as well. It’s just that you basically have to deal with more than one repo in each and every location. So as you keep on introducing more and more locations, you’re basically adding more and more repositories in each and every location, which obviously can get cumbersome, which is why the federated repositories will help you achieve the same and stay at same goal in a much easier and convenient format because you basically have to deal with just one repository at each and every location.

And here’s a couple of benefits that I’ve listed out. So enterprises can definitely collaboratively develop software across multiple locations. Like I said, this is exactly how JFrog platform with the usage of federated repos will help you scale your teams across multiple locations. And this is exactly how you can have synchronization going on for frequently uploaded binaries between locations. So let’s say your Team 1 keeps on pushing new updates to a particular binary, let’s say, by pushing new versions. So all these new versions or the new updates can very well be in sync across locations so that all the latest updates or the latest versions of any binary will be instantly made available across other locations where there are Artifactory federations that are going on. And this is exactly how you can scale your development and your development teams globally. And this is a very active trend that we see with many, many customers that we have right now.

And needless to say, all of them are very happy with the usage of federated repo because this helps them achieve this end state very, very easily. And this is exactly how we can use the same concept of Repository Federation for your DR or disaster recovery needs as well. Like I said in the previous slide, you can basically have instance one as your main instance, two as your DR instance. Federation will take care of making the artifact redundant across multiple locations so that you can switch to the failover instance if in case something happens to your main instance for whatever reason. That was a quick bit about Repository Federation. Now, Repository Federation definitely takes care of bringing redundancy for the artifact data or the metadata for artifacts as well. Now the other question that you might get is what about the different security entities? When I say security entities, I mean different users, groups of users, permissions that have been configured on each of these users or groups of users, API keys, access tokens, so on and so forth. What about them?

Why I see this is because from a platform admin’s perspective, when an admin sets up permissions for users or groups of users on a particular instance, all those permissions are valid only on that instance. So if the same company has multiple instances across multiple locations, all those permissions won’t be valid across all the other instances. It will still be valid on just that instance alone. That is scenario one. Scenario two is let’s say from an end user’s perspective or from a developer’s perspective, let’s say a developer is using a particular API key to interact with a particular instance. Now, again, similar to permissions, the API keys that are given to a particular user by a particular instance is valid only with that particular instance which gave the API keys in the first place. Now, if the same user tries to or wants to access another instance, the API keys that he has or she has is not valid because like I said, API keys are specific to the instance.

So which means when the particular user wants to interact with instance number two, the user basically needs to have another set of API keys which are given by the second instance, for example. So as the usage grows with different instances, the scale also grows with the different API keys that need to be managed from the end user’s perspective. So to help solve all these challenges or pain points, that is where we have something called Access Federation, which will, as the name says federate the access info. And when I say access info, I mean the security entities like users, groups, permissions, tokens, API keys, so on and so forth. So with the usage of Access Federation, admins can basically configure permissions on a single instance and then they can basically federate the same set of permissions across all the other instances that are part of the same federation.

Similarly, from the developer’s perspective, the same set of API keys can be made valid to be used with any of the other instances that are part of the same federation so that they don’t have to deal with multiple API keys when they want to deal with multiple instances of Artifactory which are in different locations. So that is exactly how Access Federation helps, which is basically to keep the security entities in sync across multiple sites. And it also enables management of security entities from a single place instead of having to reconfigure it again and again across multiple locations. So that is exactly how the Repository Federation will help in federating the artifacts as well as the metadata of artifacts. And the Access Federation will help you in federating the access info mainly with the security entities. So that is exactly how we can help you scale your DevOps across multiple sites. And that brings us to the end of today’s webinar. So thanks a lot. Would be happy to take any questions that you might have.

Sean Pratt:

Awesome. Thanks, Rakesh, for kind of walking us through how organizations can better scale without the hiccups and frustrations and detriments to productivity and efficiency across their development organization. We have had a couple of questions that have come in that I think would be good for you to take. And just a reminder for folks that you can go ahead and submit any questions as well. We have folks on the line to answer those and any that we don’t get to, we’ll follow up with you after the webinar for sure. So Rakesh, one question that’s come through is if an organization is using replication, how should they choose between a push or pull approach?

RAKESH KRISHNA:

That’s a great question, Sean. So again, push and pull basically will help them achieve the same end state, which is to bring artifacts over from one location to another. So difference is in the push type, when there is a single source and multiple targets, for example, since a push replication is being done, is being managed by the source, if there is multiple targets, obviously the source is the one that is doing all the heavy-lifting so that artifacts can be pushed not just to one target, but the multiple targets that have been set up. Whereas in the case of pull replication, pull replication is something that the targets do on their own behalf. So from the source perspective, the source can basically delegate or offload a lot of such individual replication responsibilities over to each one of the targets so that it doesn’t have to do all the heavy-lifting by itself.

Sean Pratt:

Great. Awesome. Thank you for that. A bit of a follow-up question. You had mentioned in the presentation that Artifactory supports over 30 different package types. Is replication available for all of those package types and all of those different package repositories?

RAKESH KRISHNA:

Yeah, absolutely. All the 30 types that we have that we support on Artifactory will definitely have replication so that whenever they have multiple instances across different sites, they can easily set up replication. And replication is something that can be set up at the repository level, not at the instance level, meaning let’s say a particular instance has hundreds of repositories. A user or a group of users might very well choose to set up replication only for 10 repositories, for example, so that only those 10 repositories will be replicated over to the other instance.

Sean Pratt:

Awesome. That’s great. So a lot of granular control in terms of what you’re sharing. So Rakesh, another question that’s come through is how are you seeing JFrog customers using kind of replication today?

RAKESH KRISHNA:

How are customers using replication? Yeah, it’s one of the very popular functionalities or features that is under usage, Sean. Like I said, early on during the presentation itself, we do have a lot of enterprise customers. In fact, we have most of the Fortune 500 companies who are our customers, and almost each one of them is using the replication functionality that we have because almost every one of them have teams across multiple sites, across multiple countries, across multiple locations. So some companies usually start small by having just one instance in one location, but as they scale, as their team scale, they immediately feel the need of having more instances across different sites. And that is when they make use of replication so that teams don’t have to come all the way across the globe to access something which is in a far away distance location.

Sean Pratt:

Gotcha.

RAKESH KRISHNA:

And same thing with the DR needs as well, because DR, again, is a very, very important factor for most of the enterprise level companies. They need to have a proper DR plan in place and as part of their DevOps tool set, DR option for Artifactory is basically to have another instance of Artifactory in a far away location or in another location basically. And so if main instance goes down or remains inaccessible for whatever reason, they’ll still have all the artifacts readily available on the failover instance somewhere in a safe location.

Sean Pratt:

Great, thanks for that. One final question, just because we’re getting close to our time here and our goal of keeping this around 30 minutes, what are the advantages of using federated repos over traditional replication? You had mentioned that you can kind of accomplish the same goals using the traditional replication, but federated repos make it a bit easier. Can you elaborate on that?

RAKESH KRISHNA:

Sure, sure. It’s a good question, Sean. So the traditional replication methods, that is the push and pull, by default there you need unidirectional, meaning if you’ve set up a replication, a push type of application between a source and a target, the source always remains a source, the target always remains the target. So in case of bidirectional replication, what needs to happen is you need to set up replication number two in which the target becomes the source and the source becomes the target. So you basically are dealing with two different repositories in each location.

So four in total, two on the source side, two on the target side. So the difference that federated repos bring in is all the federated repository replication is bidirectional by default, meaning which once a federation is set up across different repositories, across different locations, it is by default bidirectional, meaning you don’t have to deal with multiple repositories in each location. So if you have just one here and one in instance two, this is bidirectional meaning which any updates that happen here will be brought over to the other location and vice versa as well. So it basically makes things much more easier and convenient.

Sean Pratt:

Great. That makes a lot of sense. Less work, less things to maintain for whoever’s managing your instances and that sort of thing.

RAKESH KRISHNA:

Absolutely.

Sean Pratt:

Great. Awesome. Cool. So with that, we’re going to go ahead and wrap up the webinar. Rakesh, thank you so much for taking us through this in a really concise and expedient manner. And I want to thank everyone who joined us today. If you do have any questions, you can drop them last minute in the chat, or you can always reach out to us at JFrog. We’d be really happy to talk to you about your DevOps needs and how we can help make that smoother. So thanks again everyone, and we look forward to seeing you next time.

RAKESH KRISHNA:

Thanks everybody. See you.